I recently asked a founder building a retail analytics product how long he expected his computer vision project to take.

He said three months. He’d been in development for eleven. The model was accurate in the lab. In the store, under fluorescent lighting with motion blur from shopping carts, it was hitting 61% accuracy.

His vendor hadn’t warned him once.

That’s the conversation I keep having. Not about algorithms or frameworks.

About the gap between a company that builds computer vision systems that work in demo conditions and one that builds systems that survive production.

I’ve been building technology products for 14 years. At EngineerBabu, the team has delivered 500+ products across 20+ countries, and in the last four years, AI-powered visual intelligence systems have become one of the deepest areas of focus.

When Simba Beer came to us with an inventory management problem across field operations spanning hundreds of outlets, the solution wasn’t an off-the-shelf detection model.

It was a custom-trained real-time field intelligence system that understood their specific SKUs, packaging variants, and lighting conditions in Indian retail environments.

That’s the gap most buyers don’t know to look for.

This guide is for CTOs and product leaders evaluating a computer vision app development company in the USA. I’m going to tell you what most blogs won’t.

What Is a Computer Vision App Development Company?

A computer vision app development company is a specialized software engineering firm that builds systems enabling machines to interpret, classify, and act on visual data.

This spans image recognition, object detection, video analytics, pose estimation, optical character recognition, and real-time inference at the edge or cloud.

The keyword here is “systems.” Any firm can run a pre-trained YOLO model on a clean dataset.

Building a production system that trains on new data, handles distribution shift, integrates into your existing infrastructure, and maintains its SLA at scale is a different discipline entirely.

The Market Context: Why This Matters Right Now

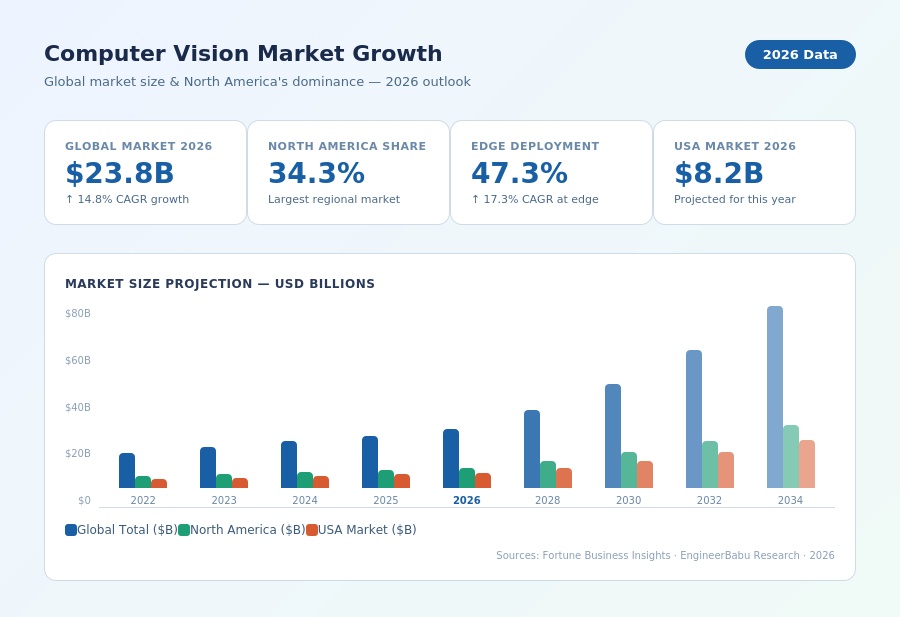

According to Fortune Business Insights, the global computer vision market was valued at $20.75 billion in 2025 and is projected to reach $72.80 billion by 2034, growing at a CAGR of 14.80%.

North America holds 34.30% of that market. The USA alone accounts for $8.02 billion in 2025.

These aren’t abstract numbers.

They mean your competitors are already working on visual AI development in manufacturing quality control, healthcare diagnostics, retail analytics, logistics automation, and security. If you’re evaluating vendors now, you’re not early.

You’re catching up.

What Most Buyers Get Wrong When Evaluating Computer Vision Development Companies

Most CTOs I talk to underestimate the complexity of production computer vision by 3x to 4x. Here’s where the mismatches happen.

- Confusing model accuracy with system reliability

A 94% accurate model in controlled testing can fall to 70% in the field when lighting changes, camera angles shift, or your object classes expand.

Accuracy is a benchmark, not a guarantee.
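One way to surface this gap before deployment is to report accuracy per capture condition rather than as a single headline number. The sketch below is a minimal illustration; the record format, the condition names, and the sample data are all hypothetical.

```python
from collections import defaultdict


def accuracy_by_condition(records):
    """Return {condition: accuracy} for (condition, predicted, actual) records."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for condition, predicted, actual in records:
        totals[condition] += 1
        if predicted == actual:
            hits[condition] += 1
    return {condition: hits[condition] / totals[condition] for condition in totals}


# Hypothetical evaluation records: (capture condition, predicted label, true label)
records = [
    ("lab", "sku_a", "sku_a"),
    ("lab", "sku_b", "sku_b"),
    ("store_fluorescent", "sku_a", "sku_a"),
    ("store_fluorescent", "sku_b", "sku_a"),
    ("store_motion_blur", "sku_a", "sku_b"),
    ("store_motion_blur", "sku_b", "sku_b"),
]

breakdown = accuracy_by_condition(records)
for condition, accuracy in sorted(breakdown.items()):
    print(f"{condition}: {accuracy:.0%}")
```

A vendor who only ever reports the aggregate figure is hiding exactly the rows you care about.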

- Treating data annotation as an afterthought

The single most time-consuming and expensive part of a custom vision project isn’t model architecture.

It’s data labeling.

For a medical imaging project, you need radiologist-quality annotation.

For a manufacturing defect detection system, you need domain experts to label subtle surface anomalies. Budget 30-40% of your total project cost here if you’re starting from scratch.

- Underestimating edge deployment complexity

Edge inferencing, running models on a device rather than sending data to the cloud, is growing fast. It holds 47.33% of the deployment share today and is growing at a 17.29% CAGR.

But running a TensorRT-optimized model on an NVIDIA Jetson is not the same engineering problem as training one on a GPU cluster in AWS.

Different team. Different skill set.

- Assuming one framework fits all

A vendor that defaults to TensorFlow for everything is showing you their comfort zone, not the best architecture for your use case.

Real-time detection at 30fps on a mobile device needs different choices than a batch processing pipeline analyzing satellite imagery overnight.

How to Actually Evaluate a Computer Vision App Development Company in the USA

1. Demand Production References, Not Portfolio Slides

Ask for references where their system has been live in production for at least 12 months.

Ask about model drift, retraining cycles, and what percentage of issues were caught before users reported them.

Any vendor who can’t answer these questions with specifics hasn’t done real production work.

2. Test Their Data Strategy Before Their Model Strategy

The first conversation with a serious computer vision company will be about your data: volume, quality, labeling budget, class imbalance, and edge cases.

If the first conversation is about which model they’ll use, leave. The model is determined by the data, not the other way around.

3. Understand Their MLOps Capabilities

Building the model is 40% of the job. The other 60% is CI/CD for ML development, model versioning, drift monitoring, retraining pipelines, A/B testing on model updates, and rollback capability.

Ask for a walkthrough of how they’ve handled a model update in a live production system. This question alone will separate most vendors.

4. Validate Industry-Specific Experience

Computer vision in healthcare diagnostics (HIPAA, FDA clearance, DICOM standards) is a completely different project from computer vision in retail inventory or autonomous vehicle perception.

Domain expertise accelerates development by 4-6 months and reduces compliance risk substantially. Ask for projects in your vertical specifically.

5. Clarify Post-Launch Ownership

Who owns model accuracy after go-live? Who detects when accuracy degrades? Who manages the retraining pipeline?

Most vendors deliver a model and walk away. The ones worth working with have explicit SLAs around inference accuracy, alerting on distribution shift, and defined retraining protocols.
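An accuracy SLA only means something if someone is measuring it continuously. As a rough sketch of what that monitoring could look like, the class below tracks accuracy over a rolling window of spot-checked predictions and flags a breach. The class name, the 90% target, and the window size are assumptions for illustration, not terms from any real contract.

```python
from collections import deque


class AccuracySlaMonitor:
    """Track rolling accuracy over the most recent labeled predictions."""

    def __init__(self, sla_accuracy, window_size):
        self.sla_accuracy = sla_accuracy
        self.outcomes = deque(maxlen=window_size)

    def record(self, was_correct):
        # Each outcome comes from a human-verified or ground-truth check.
        self.outcomes.append(bool(was_correct))

    def rolling_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def breached(self):
        # Only judge the SLA once the window is full, to avoid noisy alerts.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.sla_accuracy


# Hypothetical SLA: 90% accuracy over the last 10 verified predictions.
monitor = AccuracySlaMonitor(sla_accuracy=0.90, window_size=10)
for outcome in [True] * 10:
    monitor.record(outcome)
healthy = monitor.breached()

for outcome in [False] * 3:
    monitor.record(outcome)
degraded = monitor.breached()

print(healthy, degraded, monitor.rolling_accuracy())
```

In practice the window would be far larger, and the hard part is the contract question: who supplies the verified labels that feed `record`.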

Technical Architecture Decisions That Determine Project Success

When the EngineerBabu team approaches a computer vision project, the architecture conversation happens before the first line of code. These are the decisions that actually matter.

- Cloud vs. Edge Deployment

Cloud inference (AWS Rekognition, Google Vision AI, Azure Computer Vision) works well for non-latency-sensitive workloads with clean data pipelines.

Edge deployment is essential when you need sub-100ms latency, operate in low-connectivity environments, or have data sovereignty requirements that prevent sending visual data offsite.

For a surveillance-heavy use case, sending video streams to the cloud for every frame is economically indefensible and creates data compliance exposure.

A model running on an NVIDIA Jetson Orin at the device processes locally. The cloud only receives the event, not the raw footage.

- Custom Model vs. Foundation Model Fine-Tuning

In 2025-2026, the calculus has shifted. For many standard tasks, fine-tuning a foundation model like SAM (Segment Anything Model), CLIP, or a Vision Transformer variant on your domain data outperforms a custom-built CNN at lower cost and faster time-to-value.

When do you still build custom?

When your visual domain is highly specialized (medical imaging, satellite imagery, specific industrial defect classes), when you have inference latency requirements that foundation models can’t meet on target hardware, or when you need full model ownership without third-party dependencies.

- Real-Time vs. Batch Processing Architecture

Real-time systems require GPU-accelerated inference servers, low-latency data pipelines, and frame-level processing decisions in under 50ms.

Batch systems can use cheaper infrastructure and prioritize throughput over latency.

Building a real-time architecture when the use case only needs batch processing wastes 40-60% of infrastructure budget. I’ve seen this mistake made in both directions.
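A quick way to sanity-check a real-time claim is to add up the per-stage latencies and compare them with both the decision budget and the camera's frame interval. The sketch below does that arithmetic; the stage names and millisecond figures are hypothetical placeholders, not measurements.

```python
def frame_interval_ms(fps):
    """Time between consecutive frames at a given frame rate."""
    return 1000.0 / fps


def check_latency(stage_latencies_ms, budget_ms):
    """Return (total latency, whether it fits the budget)."""
    total = sum(stage_latencies_ms.values())
    return total, total <= budget_ms


# Hypothetical per-frame stage timings in milliseconds.
stages = {"decode": 4.0, "preprocess": 6.0, "inference": 28.0, "postprocess": 5.0}

total_ms, within_budget = check_latency(stages, budget_ms=50.0)

# Meeting the 50 ms decision budget is not the same as sustaining 30 fps:
# a strictly sequential pipeline must finish each frame within one interval.
keeps_up_at_30fps = total_ms <= frame_interval_ms(30)

print(f"total={total_ms} ms, within 50 ms budget={within_budget}, "
      f"sustains 30 fps sequentially={keeps_up_at_30fps}")
```

With these placeholder numbers the pipeline meets the 50ms budget but cannot keep up with 30fps unless stages overlap or frames are skipped, which is the kind of distinction worth asking a vendor to walk through.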

- Data Pipeline Design

The model is only as good as the data feeding it. A production computer vision system needs:

- Consistent image preprocessing (the same normalization and resizing at inference time as in training, with augmentation kept on the training side)

- Versioned datasets with annotation audit trails

- Automated quality filtering to reject corrupted or out-of-distribution inputs before inference

- Feedback loops to capture failure cases for retraining

- Drift detection that flags when incoming data distribution diverges from training data

Skipping even two of these creates technical debt that becomes very expensive at scale.
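To illustrate the drift detection item, here is a minimal sketch that compares one simple input statistic (mean image brightness, scaled 0 to 1) between training data and incoming data using the Population Stability Index. PSI is one common choice among several, swapped in here for illustration. The bin edges, the sample values, and the alert threshold are assumptions to tune, not recommendations.

```python
import bisect
import math


def histogram_fractions(values, edges, floor=1e-6):
    """Fraction of values per bin, floored so the log ratio stays defined."""
    counts = [0] * (len(edges) - 1)
    for value in values:
        if value < edges[0] or value > edges[-1]:
            continue  # outside the histogram range; ignored in this sketch
        index = min(bisect.bisect_right(edges, value) - 1, len(counts) - 1)
        counts[index] += 1
    total = sum(counts) or 1
    return [max(count / total, floor) for count in counts]


def population_stability_index(reference, incoming, edges):
    """PSI = sum over bins of (new - ref) * ln(new / ref)."""
    ref = histogram_fractions(reference, edges)
    new = histogram_fractions(incoming, edges)
    return sum((n - r) * math.log(n / r) for r, n in zip(ref, new))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]

# Hypothetical mean-brightness values per image.
training_brightness = [0.50, 0.55, 0.60, 0.52, 0.58, 0.61, 0.49, 0.57]
dim_store_brightness = [0.20, 0.30, 0.28, 0.35, 0.22, 0.40, 0.31, 0.26]

psi_same = population_stability_index(training_brightness, training_brightness, edges)
psi_shifted = population_stability_index(training_brightness, dim_store_brightness, edges)

ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff; tune per deployment
print(psi_same, psi_shifted > ALERT_THRESHOLD)
```

A real system would track several statistics (brightness, blur, embedding distances) rather than one, but the pattern is the same: compare incoming data against a stored reference and alert when the gap crosses a threshold.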

The Real Cost of Computer Vision App Development

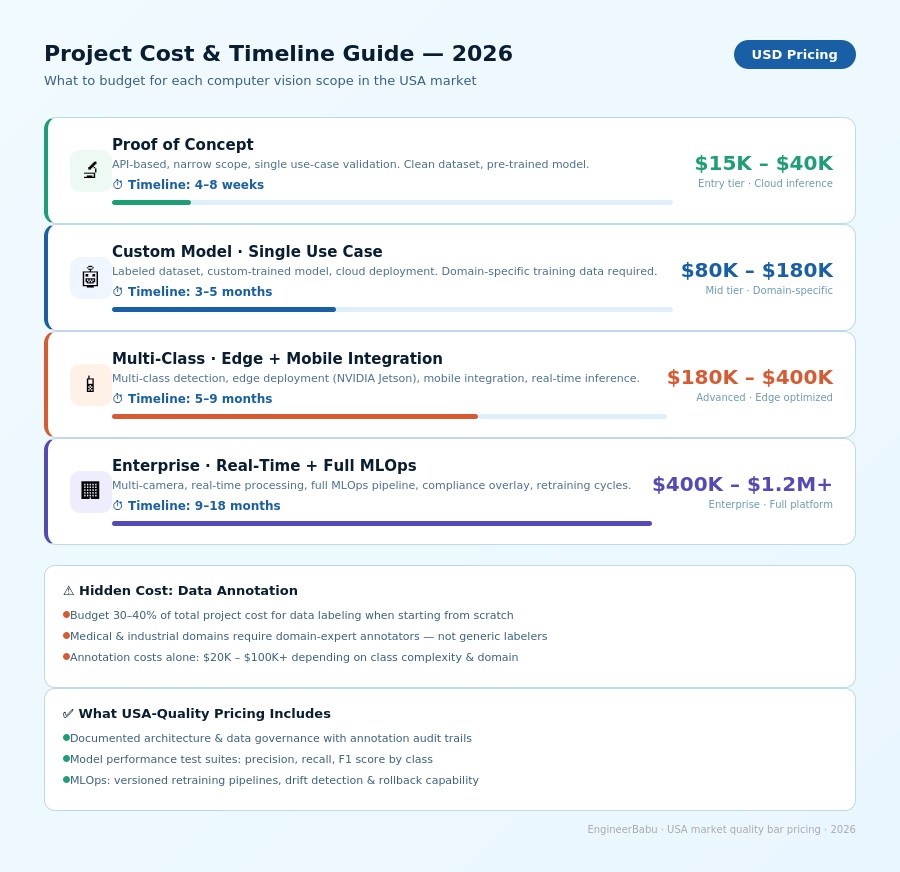

Most blogs give you ranges so wide they’re useless. Here’s a more direct breakdown:

| Project Type | Timeline | Approximate Cost (USD) |
| --- | --- | --- |
| Proof of Concept (API development, narrow scope) | 4-8 weeks | $15,000 – $40,000 |
| Custom model, single use case, cloud deployment | 3-5 months | $80,000 – $180,000 |
| Multi-class detection, edge deployment, mobile integration | 5-9 months | $180,000 – $400,000 |
| Enterprise-grade, real-time, multi-camera, MLOps included | 9-18 months | $400,000 – $1,200,000+ |

These ranges assume a US-market quality bar: documented architecture, data governance, test suites for model performance, and proper MLOps.

If a vendor is quoting substantially below these numbers for complex use cases, ask what’s being cut.

The most expensive line item most buyers don’t anticipate: dataset creation.

If you don’t have labeled training data, expect $20,000-$100,000+ in annotation costs for a production-grade custom model, depending on the domain and class complexity.

Industries Where Computer Vision Is Actually Being Deployed Right Now

I keep tabs on what’s shipping, not just what’s being announced. The real adoption is happening here.

- Manufacturing

Automated defect detection in quality control lines.

Systems running at 200+ frames per second identify surface anomalies that human inspectors miss. Manufacturing leads the market with 28.49% share in 2025.

- Healthcare

Medical imaging analysis in radiology, pathology, and dermatology.

The compliance requirements (HIPAA, FDA 510(k) clearance for diagnostic tools) make this one of the hardest domains to build in, which is also why it’s underserved by most vendors.

- Retail and CPG

Planogram compliance, inventory tracking, customer behavior analytics in physical stores.

The Simba Beer system the EngineerBabu team built falls here. Real-time field intelligence for 200+ outlets, processing SKU-level detection data from field agent devices.

- Logistics and warehousing

Package identification, barcode-free scanning, damage detection at receiving docks, autonomous warehouse robot guidance.

- Security and surveillance

Perimeter monitoring, anomaly detection, and access control via facial recognition. CCPA and GDPR compliance adds 6-12 weeks to any US market deployment.

- Automotive

Advanced driver-assistance systems (ADAS). Global ADAS camera shipments are expected to reach 240 million in 2026, up from 200 million in 2025. This segment is growing at 18.23% CAGR.

What Separates Great Computer Vision Partners from Good Ones

This is the part that competitors writing generic content can’t replicate, because they haven’t done the work.

1. They push back on your requirements

When a client tells me they want 99% accuracy, I ask them to define accuracy. Precision? Recall? F1 score? At what confidence threshold? For what class? These aren’t pedantic questions.

A system optimized for high recall catches more true cases but produces more false positives. A system optimized for high precision raises fewer false alarms but produces more false negatives.

In a medical context, false negatives can kill people. In a retail context, false positives just annoy staff. The tradeoffs are completely different. A vendor that says “sure, 99% accuracy, no problem” is lying to you.
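The precision and recall tradeoff is easy to see on a handful of predictions. The sketch below computes precision, recall, and F1 for a single class at two confidence thresholds; the scores and labels are made-up illustrative data.

```python
def precision_recall_f1(scores, labels, threshold):
    """Compute precision, recall, and F1 for one class at a confidence threshold."""
    tp = fp = fn = 0
    for score, is_positive in zip(scores, labels):
        predicted_positive = score >= threshold
        if predicted_positive and is_positive:
            tp += 1
        elif predicted_positive and not is_positive:
            fp += 1
        elif not predicted_positive and is_positive:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return precision, recall, f1


# Hypothetical model confidences and ground truth for one class.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [True, True, False, True, False, True, False, False]

strict = precision_recall_f1(scores, labels, threshold=0.85)
lenient = precision_recall_f1(scores, labels, threshold=0.35)

print("strict  (0.85): precision=%.2f recall=%.2f f1=%.2f" % strict)
print("lenient (0.35): precision=%.2f recall=%.2f f1=%.2f" % lenient)
```

The same model gives perfect precision with half the recall at the strict threshold, and perfect recall with lower precision at the lenient one. Which of those is "accurate" depends entirely on what a miss costs you.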

2. They model the failure modes before they model the use case

What happens when the camera gets dirty? What happens when lighting changes between training and deployment? What happens when a new product SKU appears that the model has never seen?

Strong teams build failure mode analysis into the requirements phase. Weak teams discover failure modes in production.

3. They have an opinion on your stack

A vendor that says “we work with whatever you have” with no further guidance is not a technical partner. When the EngineerBabu team takes on a computer vision project, there’s a point of view on whether PyTorch vs. TensorFlow matters for the specific use case, whether ONNX Runtime is the right choice for the target hardware, and whether you need a vector database for image embeddings or a traditional database with a well-designed schema is sufficient.

Having those opinions is a signal that someone has thought hard about your specific problem.

4. They’ve been burned and learned

Ask what their biggest computer vision project failure was and what they changed because of it. Anyone who says they haven’t failed isn’t doing interesting work.

The EngineerBabu team has deployed models that needed emergency rollbacks after production distribution shift. We learned to build automated drift detection and canary releases into every production system because of those experiences.
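For readers unfamiliar with canary releases, the general idea is to send a small, stable slice of traffic to the new model and promote it only if its error rate holds up against the current one. The sketch below is a generic illustration of that pattern, not the EngineerBabu implementation; the function names, the minimum sample size, and the regression tolerance are all assumptions.

```python
import hashlib


def routes_to_canary(request_id, canary_percent):
    """Deterministically assign a request to the canary model by hashing its ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < canary_percent


def canary_decision(stable_errors, stable_total, canary_errors, canary_total,
                    min_samples=200, max_regression=0.02):
    """Decide whether to promote, roll back, or keep observing the canary."""
    if stable_total == 0 or canary_total < min_samples:
        return "keep_observing"
    stable_rate = stable_errors / stable_total
    canary_rate = canary_errors / canary_total
    if canary_rate - stable_rate > max_regression:
        return "rollback"
    return "promote"


# Hypothetical counts of verified errors for each model version.
print(canary_decision(50, 1000, 5, 100))    # too few canary samples so far
print(canary_decision(50, 1000, 30, 300))   # canary error rate is clearly worse
print(canary_decision(50, 1000, 16, 300))   # canary within tolerance
```

Hashing the request ID keeps a given camera or device on the same model version for the whole trial, which makes the comparison cleaner than random per-frame routing.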

The Vendor Evaluation Framework

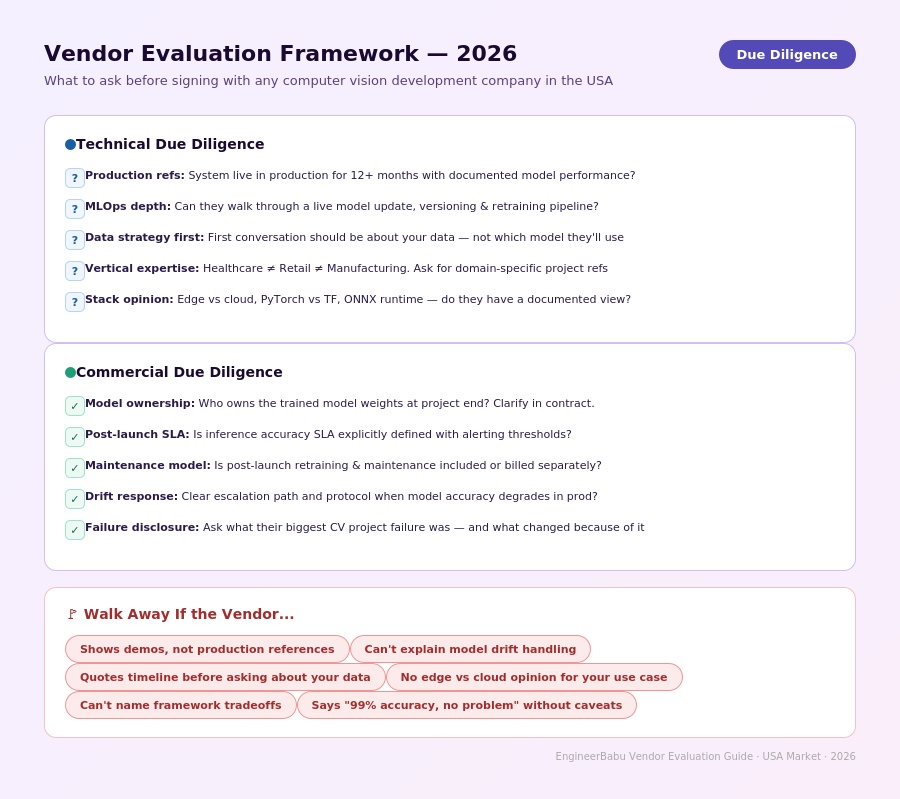

Before signing anything with a computer vision app development company in the USA, run through this.

Technical due diligence:

- Can they show a production system with documented model performance over 12+ months?

- Do they have MLOps experience? Can they describe their retraining pipeline?

- What’s their approach to data governance and annotation quality control?

- Have they deployed in your specific vertical? Ask for the hardest compliance or accuracy challenge in that project.

Commercial due diligence:

- Who owns the trained model weights at project end?

- What’s the SLA on inference accuracy post-launch?

- Is post-launch model maintenance included, or is it time and materials?

- What’s the escalation path when accuracy degrades?

Red flags:

- Can only show demo videos, not production references

- No specific answer on how they handle model drift

- Quotes timelines without asking about your data situation first

- No point of view on edge vs. cloud for your use case

- Can’t name the framework trade-offs for your specific application

Why EngineerBabu Works on Computer Vision Projects Differently

EngineerBabu is a CMMI Level 5 certified product engineering company recognized by Google AI Accelerator (Top 20 globally, 2024), NASSCOM, and LinkedIn Top 20 Startups India. The company takes 20 projects per year. That’s intentional.

Twenty projects means every engagement gets my direct attention, architecture review, and judgment calls.

No account manager is insulating you from the people actually building your product.

The computer vision work sits on top of 500+ products delivered across 20+ countries, including 75 YC-selected builds and 200+ VC-funded products. The Simba Beer AI inventory management system is one example. The team didn’t just build a model. The team built a system.

That’s the distinction that matters when you’re evaluating a computer vision app development company in the USA.

FAQ

1. How long does it take to build a production-ready computer vision application?

A scoped, single-use-case custom vision model with cloud deployment typically takes 3-5 months. Multi-class, edge-deployed, real-time systems take 5-9 months.

Enterprise platforms with full MLOps, retraining pipelines, and compliance overlays are 9-18 months.

Timeline starts from finalized, labeled training data, which itself can take 4-12 weeks to prepare.

2. What’s the difference between using a pre-trained model API and building a custom computer vision model?

Pre-trained APIs (Google Vision AI, AWS Rekognition, Azure Computer Vision) cover common object classes at low development cost and fast time-to-value.

They fail when your visual domain is specialized, your accuracy requirements are strict, your data can’t leave your environment, or you need inference at the edge.

Custom models are justified when the use case requires domain-specific training data and the business value justifies the higher development cost.

3. What industries use computer vision the most in the USA right now?

Manufacturing (28.49% of market share), healthcare, retail analytics, logistics, automotive ADAS, and security surveillance are the leading verticals.

Manufacturing and automotive are growing fastest.

Healthcare is the most technically demanding due to FDA and HIPAA compliance requirements.

4. How do I evaluate whether a computer vision development company has real production experience?

Ask for a production reference where their system has been live for 12+ months. Ask how they handle model drift. Ask for their retraining protocol.

Ask what the hardest failure mode was in that project and how they resolved it. Any vendor who struggles to answer these has done proof-of-concept work, not production engineering.

5. What should I look for in a computer vision company’s technical stack?

Look for modern frameworks like PyTorch, ONNX Runtime for cross-platform inference, YOLO variants or Vision Transformers for detection, MLflow or similar for experiment tracking, and a defined MLOps pipeline for model versioning and deployment.

Also look for edge capability (TensorRT, OpenVINO, Core ML) if your use case requires it. The specific tools matter less than evidence that they’ve made deliberate choices for documented reasons.

Work With EngineerBabu on Your Computer Vision Project

If you’re evaluating a computer vision app development company in the USA and want to have a direct conversation about the architecture decisions before you commit to anything, I’m personally on those calls.

Not a pre-sales engineer. Not an account manager. Me.

We take 20 projects a year for a reason. If your use case is interesting and the scope is real, I’d rather spend 45 minutes on a call making sure we’re aligned on data strategy, deployment environment, and accuracy expectations than discover the misalignment three months into development.

Mayank Pratap, Co-founder, EngineerBabu. 14 years building technology products. Google AI Accelerator Top 20, 2024. CMMI Level 5 Certified. 500+ products delivered across 20+ countries.