Last month, I got on a call with a founder who had already spent ₹28 lakhs with an AI chatbot development company in India.

The bot was live. It answered 12 question types. It broke on the 13th. Their support team was still manually handling 74% of queries. The vendor called it a successful deployment.

That conversation is not rare. I have versions of it every few weeks.

I have been building technology products for 14 years, and the EngineerBabu team has shipped 500+ projects across 20+ countries.

We have built chatbot layers inside lending stacks, e-commerce engines, field intelligence platforms, and neobanks. I am not writing this to sell you something.

I am writing this because the pattern of failure is consistent enough that someone needs to say it plainly.

Here is everything I know about picking and working with the right AI chatbot development company in India.

What Is an AI Chatbot Development Company in India

An AI chatbot development company in India is a technology partner that designs, builds, trains, and deploys conversational AI systems integrated into your product, operations, or customer experience workflows.

The keyword is “integrated.” A chatbot that floats above your existing systems is not a product. It is a demo.

The best companies in this space do not just write NLP pipelines.

They map your conversation flows against your actual business logic, train intent detection on real user data, connect the bot to your CRM, ERP, ticketing systems, or payment gateways, and build fallback mechanisms that do not embarrass you when the model is uncertain.

That is the baseline. Everything else is differentiation.

The Size of What Is Actually Happening Here

The chatbot market is expected to grow from USD 9.30 billion in 2025 to USD 11.45 billion in 2026, and is forecast to reach USD 32.45 billion by 2031, a 23.15% CAGR over 2026-2031.

This is not a trend. This is infrastructure consolidation.

When Salesforce’s 2025 data shows that 30% of service cases are already being resolved by AI, the competitive dynamic has shifted. Not having a well-built chatbot is increasingly a structural disadvantage.

India sits at the center of this because of three things: engineering depth, cost structure, and domain diversity.

The 28-40% cost advantage India-based teams carry over Western markets makes it the default answer for any product team thinking seriously about custom AI development services.

What Most Companies Get Wrong Before They Even Hire a Vendor

I have reviewed the architecture of probably 40 chatbot projects in the last three years. Here is where they fall apart, consistently.

- Confusing NLP complexity with product quality

Founders think the more sophisticated the language model, the better the chatbot. Not true.

A poorly scoped GPT-4 bot will fail worse than a well-scoped rule-hybrid because the failure modes are less predictable and harder to debug.

- Skipping conversation design

Conversation design is the logic of how your chatbot moves from one state to another, how it handles ambiguity, what it does when confidence is low, and how it escalates to a human.

Most vendors treat it as an afterthought. It should be the first deliverable after requirements.

- Underestimating integration depth

I talk to CTOs who budget ₹10-15 lakhs for the chatbot and then discover that CRM integration alone adds 6 weeks and another ₹8 lakhs.

The integrations are where the real value lives. A bot that cannot read your order history or customer tier has limited usefulness.

- No feedback loop after launch

AI models drift. Intent detection that works on training data degrades over time as user language evolves.

The vendor hands you the bot. Nobody re-trains it. Eighteen months later, it is answering 40% of queries correctly, and the team has lost faith in the technology.

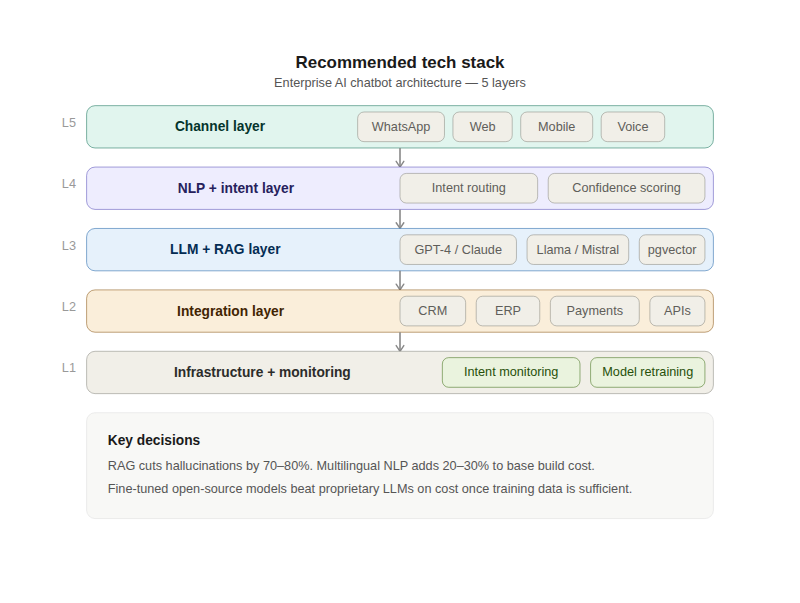

The Technical Stack Decisions That Actually Matter

This is the section most vendors skip because it requires them to form opinions, and opinions can be wrong.

1. LLM selection is not a prestige choice

GPT-4 is expensive per token. For high-volume customer support (say, 100,000 interactions per month), the inference cost alone will be ₹3-6 lakhs monthly, depending on conversation length.

Claude and Gemini are cheaper to start with and competitive on most support tasks.

For domain-specific use cases, a fine-tuned open-source model like Llama or Mistral on your own infrastructure will beat all of them on cost and often on accuracy, once you have enough training data.
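A quick way to sanity-check an inference budget before picking a model is to multiply volume by conversation length by unit price. The sketch below is a rough estimator, not vendor pricing: the function name, the 2,000 tokens per interaction, and the ₹2 per 1,000 tokens are all placeholder assumptions to swap for your own numbers.

```python
def monthly_inference_cost_inr(
    interactions_per_month: int,
    tokens_per_interaction: int,
    inr_per_1k_tokens: float,
) -> float:
    """Estimate monthly LLM spend in rupees from volume, length, and unit price."""
    total_tokens = interactions_per_month * tokens_per_interaction
    return total_tokens / 1000 * inr_per_1k_tokens


# Hypothetical inputs, not vendor pricing:
# 100,000 interactions, 2,000 tokens each, Rs 2 per 1,000 tokens.
estimate = monthly_inference_cost_inr(100_000, 2_000, 2.0)
print(f"Estimated monthly inference cost: Rs {estimate:,.0f}")
```

With these placeholder inputs the estimate comes to ₹4 lakhs a month. The cost scales linearly, so doubling average conversation length doubles the bill.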

2. RAG is not optional for enterprise deployments.

If your chatbot needs to answer questions from a knowledge base, product catalog, or internal documentation, you need a RAG pipeline.

This means a vector database (Pinecone, Weaviate, or pgvector), an embedding model, and a retrieval layer that pulls relevant context before the LLM generates a response.

Without it, your bot hallucinates. With it, hallucinations drop by 70-80% in my experience.
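To make the retrieval step concrete, here is a minimal sketch of the retrieve-then-prompt flow. It is deliberately a toy: word counts stand in for a real embedding model, an in-memory list stands in for a vector database such as pgvector, and the knowledge base entries and function names are hypothetical. The shape of the pipeline is the point, not the scoring method.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Ground the model by putting retrieved text ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Hypothetical knowledge base entries.
KNOWLEDGE_BASE = [
    "Refunds are processed within 7 working days of approval.",
    "Orders can be tracked from the My Orders page.",
    "Premium customers get free delivery on every order.",
]

question = "how long do refunds take"
top_docs = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, top_docs)
print(prompt)
```

The prompt that reaches the LLM now carries the refund policy text, so the model is answering from your content rather than from whatever it remembers.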

3. Intent detection and entity recognition still matter.

Even if you are using an LLM, you want a classification layer that routes conversations before they hit the generative model.

This reduces latency, controls cost, and gives you a clean fallback mechanism.
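A routing layer like this can be sketched in a few lines. The version below is only an illustration: a keyword overlap score stands in for a trained intent classifier, and the intents, keywords, and 0.3 threshold are made-up values. What matters is the control flow, where confident matches go to a cheap deterministic handler and everything else goes to the generative model.

```python
# Hypothetical intents and trigger keywords; a real system would use a trained classifier.
INTENT_KEYWORDS = {
    "order_status": {"order", "track", "delivery"},
    "refund": {"refund", "return", "money"},
}


def classify(message: str) -> tuple[str, float]:
    """Return the best-matching intent and a crude confidence score in [0, 1]."""
    words = set(message.lower().split())
    best_intent, best_score = "unknown", 0.0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(words & keywords) / len(keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent, best_score


def route(message: str, threshold: float = 0.3) -> str:
    """Send confident matches to a deterministic handler, the rest to the LLM."""
    intent, confidence = classify(message)
    if confidence >= threshold:
        return f"handler:{intent}"
    return "llm"


print(route("track my order"))
print(route("do you ship to Nepal"))
```

The first message matches two of the three order keywords and stays on the cheap path. The second matches nothing and is passed to the LLM.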

4. Multi-channel architecture from day one.

WhatsApp, web widget, mobile app, and voice are not the same deployment surface.

If you build for web only and then try to migrate to WhatsApp, you are rebuilding 40% of the conversation logic. Design the channel abstraction layer early.
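One way to picture the channel abstraction layer: conversation logic returns a channel-neutral reply, and each channel decides how to render it. The class names and output formats below are hypothetical and heavily simplified. In particular, the WhatsApp renderer emits plain numbered text, not a real WhatsApp Business API payload.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class BotReply:
    """Channel-neutral reply produced by the conversation logic."""
    text: str
    options: list[str]


class Channel(ABC):
    @abstractmethod
    def render(self, reply: BotReply) -> str:
        """Turn a neutral reply into something this channel can display."""


class WebWidget(Channel):
    def render(self, reply: BotReply) -> str:
        buttons = "".join(f"<button>{o}</button>" for o in reply.options)
        return f"<p>{reply.text}</p>{buttons}"


class WhatsApp(Channel):
    def render(self, reply: BotReply) -> str:
        # No buttons in this simplified version: fall back to a numbered menu.
        lines = [reply.text] + [f"{i}. {o}" for i, o in enumerate(reply.options, 1)]
        return "\n".join(lines)


def handle_order_query() -> BotReply:
    """Conversation logic written once, with no knowledge of the channel."""
    return BotReply("What do you need help with?", ["Track order", "Cancel order"])


reply = handle_order_query()
print(WebWidget().render(reply))
print(WhatsApp().render(reply))
```

Adding voice or a mobile app later means writing one more `Channel` subclass, while `handle_order_query` stays untouched.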

5. Multilingual support adds 20-30% to base development cost

India has 22 officially scheduled languages and real users in all of them. Hindi, Tamil, Bengali, Marathi, and Telugu combined reach 800+ million people.

If your market is not exclusively English-speaking urban professionals, budget for multilingual NLP from the start.

Real Project Reference: What EngineerBabu Built for Simba Beer

The EngineerBabu team built an AI-powered field intelligence layer for Simba Beer. The use case sounds simple: field reps needed real-time inventory insights. The execution was not.

The chatbot had to query live inventory data across distributors, understand unstructured field inputs, and surface actionable recommendations without requiring the rep to navigate a dashboard.

We built a WhatsApp-native interface with a custom NLP layer trained on real field rep conversations.

The intent model handled 14 distinct query categories. We built a confidence threshold that routed uncertain inputs to a structured form rather than generating a bad answer.

Distributor data came in via API integration with 3 backend systems, with a caching layer to handle connectivity issues in low-bandwidth regions.
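For readers who have not built a caching layer for flaky connectivity, one common pattern is to serve the last known value when the backend cannot be reached and to flag it as stale. The sketch below illustrates that pattern only. It is not the Simba implementation, and the class name, the distributor ID, and the stock figures are invented for the example.

```python
import time
from typing import Callable


class StaleOkCache:
    """Cache that falls back to the last known value when a fetch fails."""

    def __init__(self, fetch: Callable[[str], dict], ttl_seconds: float = 300.0):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._store: dict[str, tuple[float, dict]] = {}

    def get(self, key: str) -> tuple[dict, bool]:
        """Return (value, is_stale). Raises only if there is nothing cached."""
        cached = self._store.get(key)
        now = time.monotonic()
        if cached and now - cached[0] < self._ttl:
            return cached[1], False
        try:
            value = self._fetch(key)
        except ConnectionError:
            if cached:
                return cached[1], True  # serve old data, but say so
            raise
        self._store[key] = (now, value)
        return value, False


# Hypothetical backend that works once and then goes offline.
calls = {"n": 0}


def flaky_fetch(distributor_id: str) -> dict:
    calls["n"] += 1
    if calls["n"] > 1:
        raise ConnectionError("backend unreachable")
    return {"distributor": distributor_id, "cases_in_stock": 120}


cache = StaleOkCache(flaky_fetch, ttl_seconds=0)  # ttl of 0 forces a refetch each time
first = cache.get("D-17")
second = cache.get("D-17")
print(first, second)
```

The second lookup returns the same inventory record with the stale flag set, which lets the bot say "as of the last sync" instead of failing the rep in the field.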

This took 4 months and 3 integration sprints. It could not have been done in 6 weeks.

Any vendor quoting 6 weeks for that scope is either lying about capabilities or planning to hand you a chatbot that handles 4 of those 14 query types.

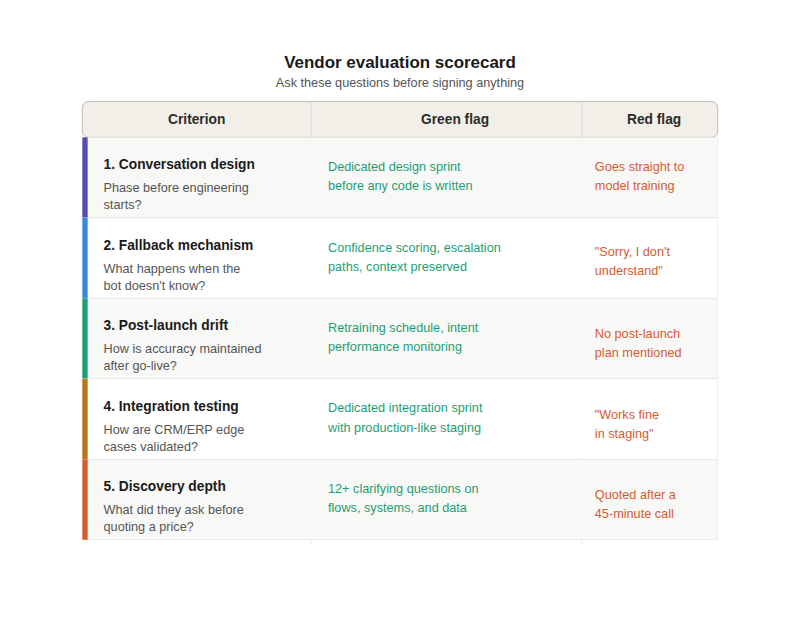

How to Evaluate an AI Chatbot Development Company in India: A Real Framework

Choosing the right partner requires evaluating technical expertise, industry experience, scalability, support quality, and pricing transparency.

The best chatbot development services should align with your business goals, integration needs, and long-term AI strategy. To test that, ask every vendor about these five things.

1. Fallback mechanism from a previous project

Any serious team has thought hard about what happens when the bot does not know the answer. If they say “the bot says sorry, I don’t understand,” that is not an answer. Proper fallback logic involves confidence scoring, escalation paths, and context preservation.
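As a reference point for what a good answer covers, the three parts of the fallback logic described above (confidence scoring, an escalation path, and context preservation) fit into a short sketch. The thresholds, field names, and clarifying prompt are assumptions chosen for illustration, and the intent and confidence values would come from whatever classifier the vendor uses.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    user_id: str
    history: list[str] = field(default_factory=list)
    low_confidence_turns: int = 0


def respond(
    convo: Conversation,
    message: str,
    intent: str,
    confidence: float,
    answer_threshold: float = 0.75,
    max_low_confidence_turns: int = 2,
) -> dict:
    """Answer when confident, ask once to clarify, then hand off with full context."""
    convo.history.append(message)
    if confidence >= answer_threshold:
        convo.low_confidence_turns = 0
        return {"action": "answer", "intent": intent}
    convo.low_confidence_turns += 1
    if convo.low_confidence_turns < max_low_confidence_turns:
        return {"action": "clarify", "prompt": "Could you tell me a bit more about that?"}
    # Escalate with the transcript so the human agent does not start from zero.
    return {
        "action": "escalate",
        "handoff": {"user_id": convo.user_id, "transcript": list(convo.history)},
    }


convo = Conversation(user_id="u1")
r1 = respond(convo, "my thing is broken", intent="unknown", confidence=0.4)
r2 = respond(convo, "the payment thing", intent="unknown", confidence=0.5)
print(r1["action"], r2["action"])
```

The first uncertain turn triggers a clarifying question. The second hands the conversation to a human along with both messages, so the user is not asked to repeat themselves.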

2. Conversation design process

They should have a dedicated phase before engineering starts. If they go straight to model training, they have not thought about user flows.

3. Handling model drift

Ask what happens post-launch, after the project is “done.” The answer should involve re-training schedules, intent performance monitoring, and a process for updating training data based on new conversations.

4. Integration testing methodology

CRM, ERP, and payment gateway integrations fail in production in ways they did not fail in staging. How do they test for this?

5. Conversation logs from a deployed project

Not the demo. The real logs. This shows you actual accuracy, actual failure modes, and how the team improved the model over time.

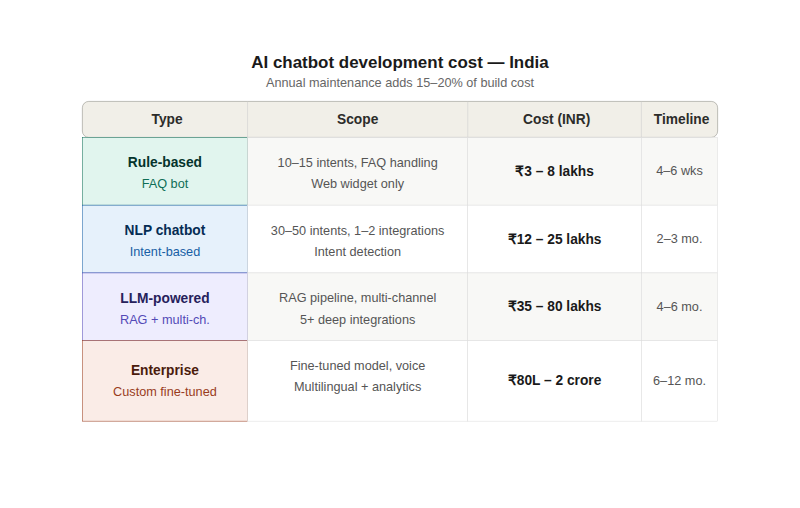

AI Chatbot Development Cost in India: Real Numbers

The ranges you will read elsewhere are almost useless without context. Here is how I would break it down.

| Type | Scope | Cost Range (INR) | Timeline |
| --- | --- | --- | --- |
| Rule-based bot | FAQ handling, 10-15 intents, web widget | ₹3-8 lakhs | 4-6 weeks |
| NLP chatbot | 30-50 intents, intent detection, 1-2 integrations | ₹12-25 lakhs | 2-3 months |
| LLM-powered bot | RAG pipeline, multi-channel, 5+ integrations | ₹35-80 lakhs | 4-6 months |
| Enterprise-grade | Custom fine-tuned model, voice, multilingual, analytics | ₹80 lakhs – ₹2 crore+ | 6-12 months |

Annual maintenance is typically 15-20% of initial build cost.

India-based teams carry a 28-40% cost advantage over Western markets on comparable scope. This is real. It is also overstated when vendors use it to justify under-scoped projects.

The Vendor Selection Mistake Nobody Talks About

Most teams evaluate vendors on portfolio and pricing. Those matter. But the single biggest predictor of project success is whether the vendor has done discovery before quoting.

A vendor who quotes ₹15 lakhs for your chatbot after a 45-minute call does not understand your problem.

A vendor who comes back with 12 clarifying questions about your conversation flows, your existing systems, your support ticket data, and your escalation logic before giving you a number is telling you something about how they work.

I take 20 projects a year. Every single one starts with a call where I am asking questions, not answering them.

The scope I quote after that call is one that I can stand behind because I understand what we are actually building.

Build vs Buy: When to Use an Off-the-Shelf Platform

Not everything needs custom development. Here is an honest matrix.

Use a platform (Intercom, Freshdesk, Tidio, etc.) if:

You have standard FAQ-style support needs, your query types number under 25, you do not need deep system integrations, and you want to be live in 2 weeks.

Build custom if:

You have domain-specific language that general NLP models do not handle well, you need to integrate with proprietary or legacy systems, your query volume is high enough that per-interaction pricing becomes expensive, or you need data sovereignty and cannot have your conversation data processed by a third-party cloud.

The inflection point in India is typically around 50,000 interactions per month. Below that, platforms win on economics. Above that, custom starts to justify itself on cost within 12-18 months.
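The payback arithmetic behind that inflection point is simple enough to run yourself. The sketch below assumes a flat per-interaction platform fee and a fixed monthly running cost for the custom build. Every number in it is a hypothetical placeholder, not a quote or a benchmark.

```python
from typing import Optional


def payback_months(
    build_cost_inr: float,
    interactions_per_month: int,
    platform_fee_per_interaction_inr: float,
    custom_running_cost_per_month_inr: float,
) -> Optional[float]:
    """Months for a custom build to pay for itself versus a per-interaction platform.

    Returns None when the platform is cheaper to run, so the build never pays back.
    """
    platform_monthly = interactions_per_month * platform_fee_per_interaction_inr
    monthly_saving = platform_monthly - custom_running_cost_per_month_inr
    if monthly_saving <= 0:
        return None
    return build_cost_inr / monthly_saving


# Hypothetical: Rs 35 lakh build, Rs 6 per interaction on a platform,
# Rs 1.1 lakh per month to run and maintain the custom bot.
high_volume = payback_months(3_500_000, 60_000, 6.0, 110_000)
low_volume = payback_months(3_500_000, 10_000, 6.0, 110_000)
print(high_volume, low_volume)
```

With these placeholder inputs, 60,000 interactions a month pays back the build in 14 months, while at 10,000 interactions the platform stays cheaper and the function returns `None`.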

What the Best AI Chatbot Projects in India Have in Common

After 500+ projects, the pattern on the successful ones is clear.

The client came in with real conversation data. Not hypothetical queries. Actual support tickets, WhatsApp messages, call transcripts, something the model could learn from.

The projects that started from scratch on training data took 2x longer to reach acceptable accuracy.

The scope was locked before development started.

Chatbot projects that allow scope creep mid-flight are catastrophically expensive to fix because every new intent type potentially requires retraining the model, not just adding a code path.

There was a named person on the client side with decision authority. Not a committee.

When the conversation design needs a call on how to handle a particular query type, that decision needs to take hours, not weeks.

Post-launch monitoring was budgeted from the beginning. The first 90 days after a chatbot launch are where you collect the most valuable production data.

If your team is not actively analyzing mishandled conversations and feeding corrections back into training, you are leaving accuracy on the table.
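That analysis does not need heavy tooling to start. A minimal sketch, assuming someone on the team labels a sample of production conversations with the correct intent: compute accuracy per intent and flag any intent that has slipped well below its launch baseline. The field names, baseline figures, and 10-point tolerance are illustrative assumptions.

```python
from collections import defaultdict


def intent_accuracy(reviewed_logs: list[dict]) -> dict[str, float]:
    """Per-intent accuracy from human-reviewed conversation logs."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for row in reviewed_logs:
        totals[row["true_intent"]] += 1
        if row["predicted_intent"] == row["true_intent"]:
            correct[row["true_intent"]] += 1
    return {intent: correct[intent] / totals[intent] for intent in totals}


def flag_drift(
    baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.10
) -> list[str]:
    """Intents whose accuracy fell more than `tolerance` below the launch baseline."""
    return sorted(
        intent
        for intent, accuracy in current.items()
        if baseline.get(intent, accuracy) - accuracy > tolerance
    )


# Hypothetical launch baseline and a small reviewed sample from production.
baseline = {"refund": 0.90, "order_status": 0.92}
reviewed = [
    {"true_intent": "refund", "predicted_intent": "refund"},
    {"true_intent": "refund", "predicted_intent": "order_status"},
    {"true_intent": "refund", "predicted_intent": "unknown"},
    {"true_intent": "refund", "predicted_intent": "refund"},
    {"true_intent": "order_status", "predicted_intent": "order_status"},
    {"true_intent": "order_status", "predicted_intent": "order_status"},
]

current = intent_accuracy(reviewed)
needs_retraining = flag_drift(baseline, current)
print(current, needs_retraining)
```

In this sample the refund intent has dropped to 50% against a 90% baseline, so it is the one to collect fresh training examples for first.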

If You Are Evaluating AI Chatbot Development Companies in India Right Now

Most of the conversations I have start the same way. A founder or CTO has either been burned by a previous vendor or is looking at a quote that feels too clean to be real.

If you are at that point in your evaluation and want to talk through the architecture decisions, the integration scope, or the model selection trade-offs before you sign anything, I am usually the one on those calls at EngineerBabu. No account manager, no sales team.

EngineerBabu is a CMMI Level 5 certified product engineering company, Google AI Accelerator top 20 globally in 2024, and a NASSCOM member.

We have delivered 500+ projects across 20+ countries. The founders I work with come from referrals, not ads. I would rather earn the right to build your product than win a competitive pitch.

FAQ

Q1. How long does it take to build an AI chatbot in India?

Timelines range from 4-6 weeks for a rule-based FAQ bot to 4-6 months for an LLM-powered chatbot with RAG and multiple system integrations, and 6-12 months for enterprise-grade builds with custom fine-tuning, voice, and multilingual support.

Q2. What is the cost of AI chatbot development in India?

Basic NLP chatbots with 30-50 intents and 1-2 integrations typically run ₹12-25 lakhs.

Enterprise-grade builds with custom model fine-tuning, voice support, multilingual NLP, and deep system integrations range from ₹80 lakhs to ₹2 crore. Annual maintenance adds 15-20% of initial build cost.

Q3. Which industries benefit most from AI chatbots in India?

BFSI (banking, insurance, lending) leads on ROI because query volumes are high and the cost of a wrong answer is quantifiable. E-commerce, healthcare, edtech, and SaaS support functions follow.

The highest-impact deployments are in sectors where 60-80% of incoming queries are repetitive and structured.

Q4. What is the difference between a rule-based chatbot and an AI chatbot?

A rule-based chatbot follows predefined decision trees. It is fast to build, predictable, and brittle. It fails on any query outside its scripted paths.

An AI chatbot uses NLP and machine learning to understand intent, handle variations in phrasing, and respond to queries it was not explicitly trained on. The right choice depends on query diversity and volume.

Q5. How do I evaluate an AI chatbot development company in India?

Ask for conversation logs from a deployed project. Ask how they handle model drift post-launch. Ask what their conversation design process looks like before any engineering starts.

Ask what the fallback mechanism looks like when confidence is low. The answers to these four questions will separate serious vendors from everyone else.