The dawn of the 21st century has seen an unprecedented proliferation of Artificial Intelligence. Business leaders across sectors agree that AI and ML will enable them to optimize cost, manage risk, streamline operations, and fuel innovation. A Forbes survey suggests that by 2022, investments in advanced analytics will exceed 11% of overall marketing budgets, and that enterprises will spend close to $125B on AI and ML tools by 2025. As the business landscape shifts to an AI-first approach, the adoption of Python for AI-based applications is growing too. In this article, we will look at the AI landscape, some of the Python tools used for AI, and the key reasons why Python is the preferred language for AI.

A significant AI engineering ecosystem has sprung up over the last few years, and it is helping to accelerate progress in this area. Tools, frameworks, and open-source libraries give the engineering community ready-to-use implementations of common boilerplate. The trend is so prominent that large organizations like Google, Microsoft, and Facebook are open-sourcing their AI tools and frameworks to help engineers build AI-based solutions. Most of these tools and frameworks are written in, or primarily used through, Python. Python has become dominant in every aspect of AI engineering work: ML, Data Engineering, Data Science, Model Development, and Deployment.

This raises the question: what is AI, and why is the AI engineering community turning to Python as its language of choice for AI-based solutions? In the following sections, we will first build an understanding of the Artificial Intelligence domain and some of the Python-based tools used in it, and then look at the key reasons for, and advantages of, using Python for AI.

The AI Landscape and Benefits of using Python for AI

A Brief Journey of Artificial Intelligence (AI)

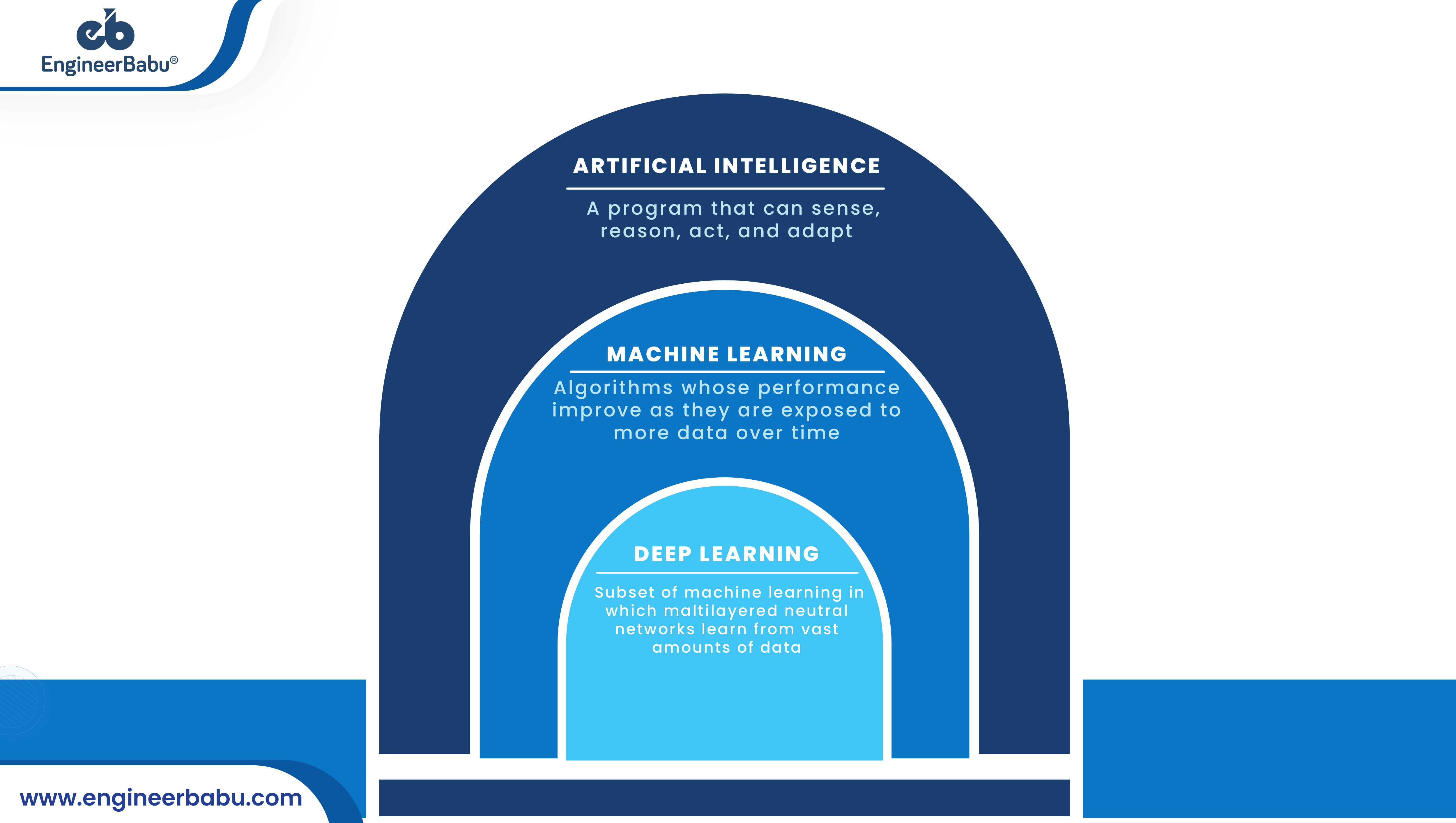

Artificial Intelligence, in general, means making machines mimic human behavior. Founded around 1956, the field set out to make machines do things considered to be uniquely human capabilities, like intelligence or intuition. In the early days, research was mostly about board games and logic experiments.

Some early AI, such as rule-based or expert systems, was considered little more than a glorified if-else program: a lot of domain knowledge about the problem was coded into the system with the help of experts in that area. In its initial days, AI typically worked by checking all possible options and then choosing the most plausible answer as the final output.
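To make the "glorified if-else" idea concrete, here is a minimal sketch of a rule-based classifier in Python. The domain (classifying animals from a few yes/no facts), the function name `classify_animal`, and the rules themselves are hypothetical and purely illustrative; they are not taken from any real expert system.

```python
def classify_animal(facts):
    """Toy rule-based 'expert system': hand-written rules checked in a fixed order.

    `facts` is a dict of hypothetical yes/no observations, e.g.
    {"has_feathers": True, "can_fly": False}. Missing facts count as False.
    """
    # Each branch encodes knowledge a domain expert wrote down; nothing is learned from data.
    if facts.get("has_feathers") and facts.get("can_fly"):
        return "flying bird"
    if facts.get("has_feathers"):
        return "flightless bird"
    if facts.get("has_fur") and facts.get("lays_eggs"):
        return "egg-laying mammal"
    if facts.get("has_fur"):
        return "mammal"
    return "unknown"  # no rule matched


print(classify_animal({"has_feathers": True, "can_fly": False}))  # flightless bird
print(classify_animal({"has_fur": True, "lays_eggs": True}))      # egg-laying mammal
```

Every new case such a system should handle needs another hand-written rule, which is the limitation that learning from data set out to address.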

Machine Learning: The Beginning of an Era

As research around AI progressed, a new subdomain of Artificial Intelligence emerged, which came to be called Machine Learning. The idea was to use statistical models that learn from data about past observations, so that the resulting model can help explain and predict future observations. Using classifiers, clustering algorithms, and other statistical techniques to make sense of data started to take shape in academia and industry.
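The "learn from past observations, predict future ones" idea can be sketched in a few lines of plain Python. The example below implements a nearest-centroid classifier, one of the simplest statistical classifiers, chosen here purely for illustration: training averages the examples of each class, and prediction picks the class whose average is closest. The data and function names are made up; libraries such as scikit-learn provide a ready-made, tested version (`sklearn.neighbors.NearestCentroid`).

```python
from statistics import mean


def fit_centroids(points, labels):
    """'Training': average the past observations of each class into one centroid."""
    by_label = {}
    for point, label in zip(points, labels):
        by_label.setdefault(label, []).append(point)
    # zip(*members) regroups the points column by column, so each feature is averaged separately
    return {
        label: tuple(mean(column) for column in zip(*members))
        for label, members in by_label.items()
    }


def predict_class(centroids, point):
    """'Prediction': return the label whose centroid is closest (squared Euclidean distance)."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(point, centroid))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Made-up observations: (height_cm, weight_kg) with a size label, for illustration only
X = [(150, 50), (155, 55), (160, 58), (180, 80), (185, 85), (190, 90)]
y = ["small", "small", "small", "large", "large", "large"]

centroids = fit_centroids(X, y)
print(predict_class(centroids, (158, 54)))  # small
print(predict_class(centroids, (182, 83)))  # large
```

Unlike the rule-based approach, nothing here is specific to the domain: feeding in different observations produces a different model.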

Python for AI – Tools for Machine Learning

Machine Learning relies on statistical modelling and needs to train models on substantial amounts of data, and this work is generally done in Python or R. R is an open-source language and environment for statistical computing; however, it is preferred mainly by the academic community, and its library support is still catching up. Python is by far the most dominant language in this space.

The open-source community in the machine learning and statistical modelling landscape is very active. Tools like NumPy, scikit-learn, and pandas are widely used by engineers and scientists alike, and the growing community means that engineers new to this area can count on plenty of support.

Deep Learning: Getting Closer to Humanization of ML

Deep Learning is a subset of ML whose models, artificial neural networks, are loosely inspired by the way neurons work in our brain. The artificial neurons are arranged in layers of repeating structures. When presented with enough data, a network learns from it using mathematical optimization: backpropagation computes how much each parameter contributed to the prediction error, and gradient descent adjusts the parameters to reduce that error.
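Under heavily simplified assumptions, this training loop can be illustrated with a single artificial neuron written in plain Python. The sketch below trains one sigmoid neuron to reproduce the logical OR function with batch gradient descent; with only one layer, backpropagation reduces to a single application of the chain rule. The function names, learning rate, and epoch count are illustrative choices, not values from any framework. Frameworks such as TensorFlow and PyTorch compute these gradients automatically for networks with many layers.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, epochs=2000, learning_rate=0.5):
    """Fit weights w and bias b of one sigmoid neuron by batch gradient descent.

    Loss: mean binary cross-entropy. For a sigmoid output, the gradient of that
    loss with respect to the pre-activation z simplifies to (prediction - target).
    """
    w = [0.0, 0.0]
    b = 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = [0.0, 0.0]
        grad_b = 0.0
        for (x1, x2), target in samples:
            prediction = sigmoid(w[0] * x1 + w[1] * x2 + b)  # forward pass
            dz = prediction - target                          # backward pass (chain rule)
            grad_w[0] += dz * x1
            grad_w[1] += dz * x2
            grad_b += dz
        # Gradient descent: step each parameter against its average gradient
        w[0] -= learning_rate * grad_w[0] / n
        w[1] -= learning_rate * grad_w[1] / n
        b -= learning_rate * grad_b / n
    return w, b


def predict_label(w, b, x1, x2):
    """Return 1 if the neuron's output exceeds 0.5, else 0."""
    return int(sigmoid(w[0] * x1 + w[1] * x2 + b) > 0.5)


# Training data for logical OR: ((inputs), target)
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

w, b = train_neuron(OR_SAMPLES)
print([predict_label(w, b, x1, x2) for (x1, x2), _ in OR_SAMPLES])  # [0, 1, 1, 1]
```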

Today's massive availability of data and mature frameworks makes training neural networks fairly easy. Deep learning has become the tool of choice in many application areas, such as image processing, text processing, and trading.

Python for AI – Tools for Deep Learning

Over the last decade, the Deep Learning space has seen an explosion of tools from major technology companies like Google, Facebook, and Microsoft. Almost all of these frameworks support Python as the de facto language for training, and many for inference as well; some popular frameworks support other languages too.

Frameworks like TensorFlow, PyTorch, and MXNet are very popular for Deep Learning engineering and experimentation. Python itself is deliberately kept beginner-friendly, and it works well with other frameworks for data processing, engineering, and visualization. Deep Learning engineering is, in general, a highly repetitive and experimentation-heavy process, so it needs a language that is flexible and very expressive.

Data Engineering: Working with the New Oil

In the age of AI, data is the new oil and data engineering is its refining process. Artificial Intelligence depends heavily on data, and not just any data but good-quality data. However capable Deep Learning is of building quality models, the result will always depend on the data we feed into training. This gives rise to two parallel engineering domains: Data Engineering and Data Science.

Data Engineering comprises data collection, processing, cleaning, governance, analysis, reporting, and visualization. It deals with putting tools and frameworks in place that make the whole workflow seamless and usable for the modeling and reporting tasks that lead to better decision-making.

Python for AI – Tools for Data Engineering

The Data Engineering process requires frameworks and infrastructure to ingest, process, and store large quantities of data. That infrastructure must scale not only vertically but also horizontally across large server farms. The workflow includes data cleaning, feature engineering, and storing large amounts of data.

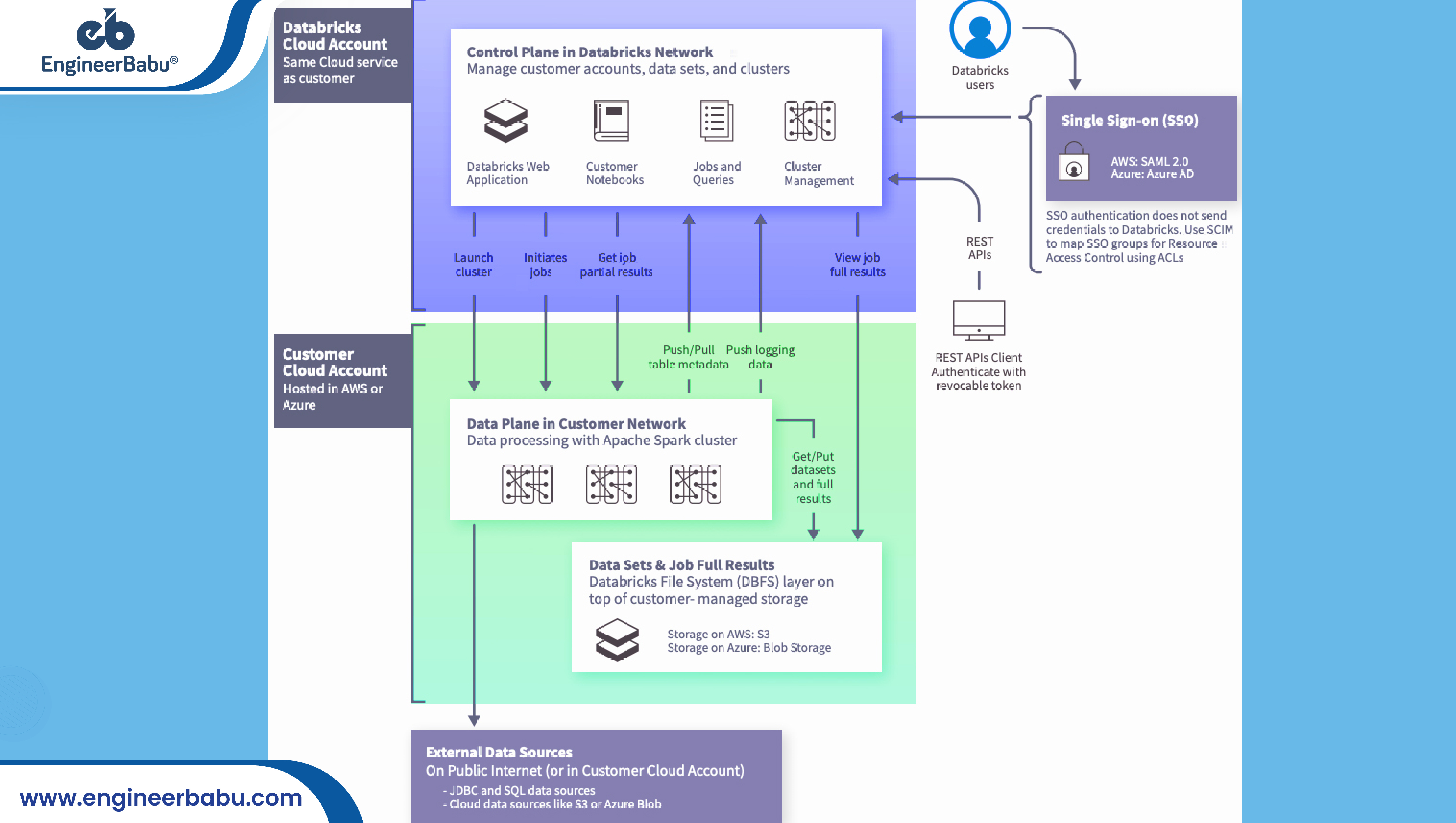

The tools include Apache Spark, Apache Kafka, Delta Lake, and many more. This area typically relies heavily on existing big data architecture. It also needs a very flexible infrastructure for experimenting with data in short, iterative cycles. Managed data and analytics platforms, such as Databricks, have also become commonplace.
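At toy scale, the data cleaning and feature engineering steps described above can be sketched with nothing but Python's standard library. The sketch assumes a hypothetical CSV export with made-up columns (`customer_id`, `country`, `amount`) and a made-up "large order" threshold; in production, the same logic would typically run on a framework such as Apache Spark so that it can scale horizontally.

```python
import csv
import io

# Hypothetical raw export with typical problems: stray whitespace,
# inconsistent casing, a missing value, a duplicate row, and a malformed number.
RAW = """customer_id,country,amount
1, us ,120.50
2,DE,
3,us,80
1, us ,120.50
4,fr,notanumber
"""

LARGE_ORDER_THRESHOLD = 100.0  # assumed business rule, for illustration only


def clean(rows):
    """Yield cleaned records: normalized, deduplicated, with one engineered feature."""
    seen = set()
    for row in rows:
        country = row["country"].strip().upper()
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # drop rows whose amount is missing or not a number
        key = (row["customer_id"].strip(), country, amount)
        if key in seen:
            continue  # drop exact duplicates after normalization
        seen.add(key)
        yield {
            "customer_id": int(row["customer_id"]),
            "country": country,
            "amount": amount,
            # Feature engineering: derive a new column from an existing one
            "is_large_order": amount >= LARGE_ORDER_THRESHOLD,
        }


records = list(clean(csv.DictReader(io.StringIO(RAW))))
for record in records:
    print(record)
```

Only customers 1 and 3 survive here: the empty amount, the duplicate row, and the malformed number are all filtered out, and each surviving record gains an `is_large_order` flag.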

Data Visualization: Connecting Numbers to Narratives

Journalist and designer David McCandless said in his TED talk, “By visualizing information, we turn it into a landscape that you can explore with your eyes, a sort of information map. And when you’re lost in information, an information map is kind of useful.”

A picture is worth a thousand words. Decision-makers need not just the numbers but the narrative behind them in order to zero in on the right options. Data Visualization is a complex engineering discipline that stands at the confluence of art and engineering.

Visualization adds narrative to the numbers, which is very valuable when conveying the right inputs to a company's decision-makers, because information is easier to process in condensed form. Raw data is generally too verbose to make expressive reports or to give decision-makers the information they are looking for. Visualization helps bridge that gap.

Python for AI – Tools for Data Visualization

Data Visualization tools available in Python include Matplotlib, Seaborn, Plotly, ggplot, and Altair. Visualization tools need simple-to-use APIs and cross-platform support, such as rendering in a browser, and interactivity is a helpful bonus.

Why is Python the Most Preferred Language for AI?

Guido van Rossum began developing Python in the late 1980s, and it was first released in 1991. Since then, thanks to its simplicity, expressiveness, and flexibility, it has been the language of choice for many general-purpose applications, for amateur and seasoned programmers alike. A few of the features that work to Python's advantage are:

1. Simple to Learn and Use

Python is simple to start with and use, and developers can build expertise in it with relatively little effort. A huge buffet of online tutorials makes learning Python easy for beginners. Its simple syntax, expressive style, and near-natural-language semantics make it an ideal choice for developers working on AI. Quick experiments and iterations are easy because Python code is interpreted, and when speed matters, it can be tuned to run much faster, since compiled options are also available (many numerical libraries, for example, do their heavy lifting in compiled C code).

2. Mature and Supportive Community

Python has been around for about 30 years, and over that time its developer community has grown many-fold. From documentation to tutorials to books, there is an extensive choice of resources for taking skill levels from beginner to expert in less time. Being able to get help when needed gives programmers the confidence to dive in, and it also saves a lot of time that would otherwise go into reinventing the wheel.

3. Support from Large Companies

Among the current generation of languages, Python arguably has the broadest backing from large corporations. Facebook, Amazon, Google, Uber, and many other companies release open-source frameworks and packages in Python, and it is steadily becoming the default standard for developers across the globe.

4. Versatile Open-Source Library Support

Almost any domain we can think of is very likely to have Python libraries and frameworks available. This saves time, promotes reuse, and helps build the developer community.

5. Efficient, Reliable, Flexible, and Versatile

Python applications run almost everywhere: on desktops, servers, and even mobile devices. It is one of the most versatile languages of the current generation. That versatility attracts more applications, which in turn draws more developers. Big Data, Machine Learning, and Deep Learning are some of the latest areas where Python has found application.

Python has a prominent place in the Data Analytics space. The research community is in love with Python, as is evident from its many applications. Thousands of Machine Learning libraries are doing the rounds, and more are added every day.

6. Rapid Automation Prototyping

Python is the poster child of the automation domain. With so many tools, libraries, and frameworks available, getting into automation and mastering it is relatively easy. Artificial Intelligence and data processing require a great deal of automation, because there is so much data to crunch that handling its sheer volume by hand is simply not possible.
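As a minimal illustration of the kind of repetitive chore this automation covers, the sketch below counts the data rows in every CSV file in a folder using only Python's standard library (`pathlib` and `csv`). The function name and the assumption that each file has exactly one header row are hypothetical; a real pipeline would do far more than count rows.

```python
import csv
from pathlib import Path


def summarize_csv_directory(directory):
    """Return {file name: number of data rows} for every CSV file in `directory`.

    Assumes (hypothetically) that each file starts with a single header row.
    """
    summary = {}
    for path in sorted(Path(directory).glob("*.csv")):
        with path.open(newline="") as handle:
            reader = csv.reader(handle)
            next(reader, None)  # skip the header row; safe even for an empty file
            summary[path.name] = sum(1 for _ in reader)
    return summary
```

Pointed at a folder of daily exports, `summarize_csv_directory` returns one row count per file, and the same loop works unchanged whether the folder holds ten files or ten thousand.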

Wrapping Up

Python, with its simplicity, robustness, expressiveness, interpreted execution, and huge open-source, corporate, and community support, offers just the right mix of everything. Artificial Intelligence engineering is a highly iterative and experimentation-heavy domain, which makes Python a natural fit for its applications. No wonder the popularity of Python for AI has followed a steady upward trend, one that is likely to continue in the coming years.