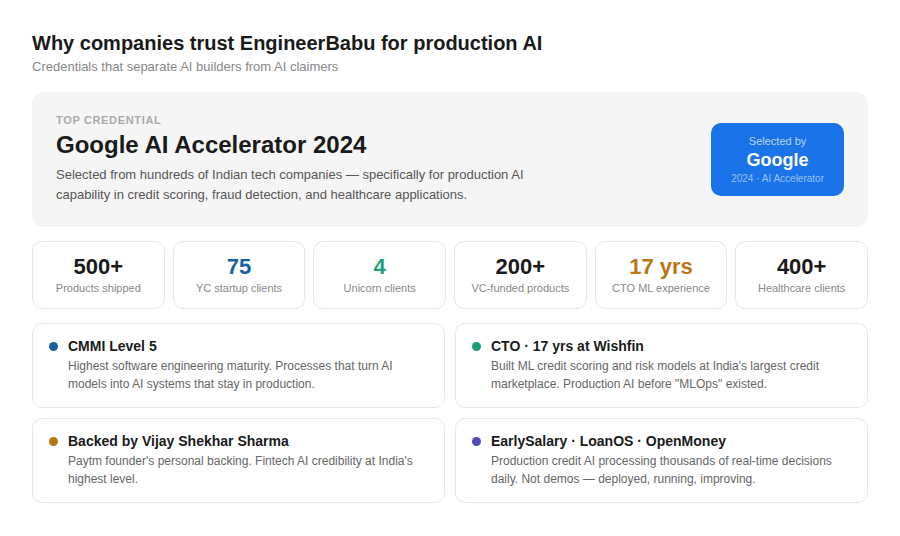

In 2024, Google evaluated hundreds of technology companies for the AI Accelerator programme.

They selected EngineerBabu.

Not for general software development. Not for mobile apps. Not for web platforms. Specifically for AI capabilities in production applications.

I want to explain what that means. Because every software company in India now has an “AI Development” page on their website. Every one claims AI capabilities. The word has been diluted to the point of meaninglessness. When a company says “we do AI,” it could mean they’ve deployed production ML models processing thousands of real-time decisions daily — or it could mean they’ve called the ChatGPT API from a Node.js backend.

Google’s evaluation separated these two categories. Their accelerator programme doesn’t accept companies that use AI. It accepts companies that build AI. Companies that train models, deploy inference pipelines, manage data quality at scale, and operate AI systems in production where the outputs have real consequences.

The EngineerBabu team builds AI that decides whether a person gets a loan. AI that detects fraud in financial transactions. AI that predicts which patients will miss hospital appointments. AI that automates insurance claims processing. AI that scores credit risk across millions of data points in under two seconds.

That’s what Google saw. Production AI with real stakes.

My name is Mayank Pratap. I co-founded EngineerBabu 14 years ago. The team has shipped 500+ products. 200+ for VC-funded companies. 75 for Y Combinator-selected startups. 4 clients that became unicorns. CMMI certified at Level 5. Vijay Shekhar Sharma — the founder of Paytm — backs us personally.

But for AI specifically, two credentials matter more than everything else. The Google AI Accelerator 2024 selection, because Google doesn’t validate lightly. And the CTO’s 17 years at Wishfin building ML-driven scoring systems, risk models, and prediction engines at one of India’s largest credit marketplaces, because production AI expertise isn’t something you acquire from a course. It’s something you earn over decades of building systems where the AI’s decisions have financial consequences.

This blog is for the CTO, VP Engineering, or founder who needs AI that works in production. Not a demo. Not a proof of concept that impresses the board and then sits unused. AI that runs every day, makes real decisions, and gets better over time.

The AI Talent Reality — Why India, and Why It Matters Now

The global AI talent shortage is the most acute skills gap in technology. Every company wants AI. Almost no company can find enough AI engineers to build it.

The US has approximately 300,000 AI/ML professionals. Demand far exceeds supply — senior ML engineers in San Francisco command $200,000-$350,000 in total compensation. Even at those rates, positions go unfilled for months. A Series A startup competing for AI talent against Google, Meta, and OpenAI is bringing a knife to a gunfight.

India has the second-largest AI talent pool globally, after the US. IIT Bombay, IIT Delhi, IIT Madras, IISc Bangalore, IIIT Hyderabad — India’s premier engineering institutions have some of the world’s strongest AI research programmes. Indian researchers are disproportionately represented in top AI conferences — NeurIPS, ICML, ICLR. India produces more AI research papers per year than any country except the US and China.

But research talent isn’t the same as production talent. The gap between an AI model that works in a Jupyter notebook and an AI system that works in production — handling real data, real traffic, real edge cases, real failure modes — is enormous. Most AI teams worldwide struggle with this gap.

This is where the EngineerBabu team’s specific combination of capabilities becomes decisive. The team doesn’t just have AI research talent. They have 14 years of production engineering experience applied to AI systems. The CTO’s 17 years at Wishfin were spent building ML systems that ran in production: credit scoring models that evaluated real applications, risk prediction engines that influenced real lending decisions, fraud detection systems that caught real fraud. Not experiments. Production.

Google validated this production AI capability through the AI Accelerator selection. Not because the team published a paper. Because the team deploys AI that works.

For US, UAE, Australian, and Singaporean companies that need production AI — the Indian talent pool provides the depth, and companies like EngineerBabu provide the production engineering discipline to turn that talent into working systems.

What AI Actually Means in Production — And Why Most AI Projects Fail

Here’s the uncomfortable truth about AI development.

85% of AI projects fail to reach production. Not because the AI doesn’t work in the lab. Because the engineering around the AI — data pipelines, model deployment, monitoring, retraining, integration with existing systems — wasn’t built by people who understand production engineering.

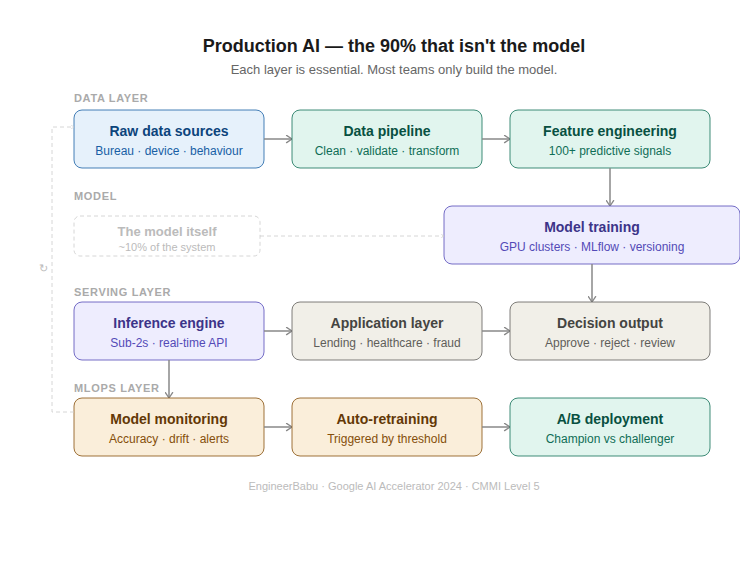

An AI model is maybe 10% of a production AI system. The other 90% is infrastructure.

Data pipelines that collect, clean, validate, and transform raw data into training-ready datasets. Feature engineering that extracts meaningful signals from noisy data. Model training infrastructure that handles compute-intensive workloads efficiently. Model versioning that tracks which model is deployed where and when. Inference pipelines that serve predictions at the speed the application demands — milliseconds for real-time credit scoring, seconds for diagnostic imaging analysis. Monitoring systems that detect model drift, data quality degradation, and prediction accuracy changes over time. Retraining pipelines that update models as new data becomes available without disrupting production operations.

Most AI development companies build the model. The EngineerBabu team builds the system.

When EarlySalary’s credit scoring engine was built, the model was one component. The production system included bureau data integration pipelines, alternative data collection (device signals, behavioral data, transaction patterns), feature engineering for 100+ variables, real-time inference serving thousands of decisions daily, model performance monitoring against portfolio outcomes, automated retraining triggers when accuracy metrics dropped below thresholds, and A/B testing infrastructure for deploying updated models against the incumbent.
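To make the "automated retraining trigger" idea concrete, here is a minimal sketch in Python. It is an illustration, not the EarlySalary implementation: the class name `RetrainingTrigger`, the 0.80 threshold, and the window sizes are all assumptions chosen for the example. It also assumes true outcomes (for example, repayment results) eventually arrive so each prediction can be marked right or wrong.

```python
from collections import deque
from typing import Optional


class RetrainingTrigger:
    """Signals retraining when rolling accuracy drops below a threshold.

    Hypothetical helper for illustration; all default values are assumptions.
    """

    def __init__(self, threshold: float = 0.80, window: int = 500, min_samples: int = 100):
        self.threshold = threshold
        self.min_samples = min_samples
        # Keep only the most recent `window` results (True = prediction was correct).
        self.outcomes = deque(maxlen=window)

    def record(self, predicted: int, actual: int) -> None:
        """Store whether one prediction matched the observed outcome."""
        self.outcomes.append(predicted == actual)

    def rolling_accuracy(self) -> Optional[float]:
        """Accuracy over the current window, or None if there is too little data."""
        if len(self.outcomes) < self.min_samples:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_retrain(self) -> bool:
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.threshold
```

In a real pipeline the `should_retrain()` signal would typically kick off a scheduled training job rather than retrain inline, and accuracy would usually be replaced by whichever business metric the system is accountable for.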

That’s what production AI looks like. It’s not glamorous. It’s engineering.

The CTO’s 17 years at Wishfin were spent building exactly this kind of production AI infrastructure, before the term “MLOps” was invented. Before everyone had an AI strategy. When it was just called “building scoring models that work.” That foundational experience, predating the hype cycle, is why the team builds AI systems that actually reach production and stay there.

Where AI Creates Real Value — Specific Use Cases the Team Has Built

I’m going to be specific. Not “AI can transform your business.” Specific use cases with specific outcomes.

AI Credit Scoring and Lending Intelligence

This is the team’s deepest AI expertise. The Google AI Accelerator 2024 selection recognised this capability specifically.

Traditional credit scoring uses a credit bureau score and a few demographic variables. It’s a blunt instrument. It approves people who will default and rejects people who would repay — because the data is too thin to distinguish.

AI credit scoring combines bureau data with dozens of alternative signals — device type and usage patterns, app usage behaviour, transaction history velocity, employment verification data, social graph signals, geographic risk factors, time-of-day application patterns. The ML model identifies non-obvious correlations that traditional scoring misses.

EarlySalary’s AI credit engine processes thousands of real-time decisions daily. The model continuously learns from repayment outcomes — every loan that performs and every loan that defaults feeds back into the model, making the next thousand decisions more accurate than the last thousand.

The result: higher approval rates with lower default rates. More people get credit. Fewer default. The lending company makes more money with less risk. Everybody wins.

For US mortgage companies, UAE consumer lenders, Australian BNPL providers, Singapore digital lending startups — this AI credit intelligence is the difference between a lending business that scales profitably and one that collapses under its own default rate.

AI Fraud Detection

Fraud in financial systems is an arms race. Fraudsters evolve. Rule-based systems don’t. AI-based fraud detection does.

The team builds fraud detection systems that operate on multiple layers. Transaction velocity monitoring — detecting unusual patterns in real-time. Device fingerprinting — identifying when a single device is associated with multiple identities. Behavioral biometrics — detecting when the person using the app isn’t the person who usually uses the app (typing cadence, navigation patterns, interaction speed). Network analysis — identifying rings of connected accounts engaged in coordinated fraud.
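Two of these layers are simple enough to sketch in a few lines of Python. This is a simplified illustration under stated assumptions, not the team's production system: `VelocityMonitor`, `devices_with_many_identities`, and every limit below are hypothetical, and the velocity check assumes events arrive in timestamp order.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Flags accounts that exceed max_events within a sliding time window."""

    def __init__(self, max_events: int = 5, window_seconds: float = 60.0):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # account_id -> recent timestamps

    def observe(self, account_id: str, timestamp: float) -> bool:
        """Record one transaction; return True if the account is now over the limit."""
        events = self._events[account_id]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_events


def devices_with_many_identities(logins, max_identities=2):
    """Return device IDs seen with more than max_identities distinct user IDs.

    `logins` is an iterable of (device_id, user_id) pairs.
    """
    seen = defaultdict(set)
    for device_id, user_id in logins:
        seen[device_id].add(user_id)
    return {device for device, users in seen.items() if len(users) > max_identities}
```

Checks like these are closer to rules than to ML. In practice their outputs are usually fed to a model as features, alongside the behavioural and network signals described above.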

These systems don’t replace rule-based fraud prevention. They sit on top of it, catching the sophisticated fraud that rules miss. And they improve over time — every caught fraud and every false positive feeds back into the model.

The same ML engineering discipline that powers credit scoring powers fraud detection. Different problem. Same engineering patterns. The CTO’s Wishfin experience included both, because at a credit marketplace, credit scoring and fraud detection are two sides of the same coin.

Healthcare AI

AI in healthcare is the most impactful application of artificial intelligence — and the most prone to dangerous hype. The team builds healthcare AI that’s specific, validated, and clinically useful.

Patient no-show prediction — ML models that predict which patients will miss appointments, enabling proactive rescheduling and reducing revenue loss. Built for hospital chains where appointment volumes make manual prediction impossible.

Clinical documentation AI — systems that auto-generate clinical notes from structured data or voice input, reducing physician documentation burden. The #1 cause of physician burnout is paperwork. AI that reduces paperwork directly improves care quality because physicians spend more time with patients.

Claims processing automation — AI that auto-generates insurance submissions, identifies missing documentation before submission, and predicts claim rejection probability. Real financial impact — healthcare systems using similar AI have recovered significant revenue and reduced claims processing time by 60%+.

Diagnostic imaging assistance — computer vision models that flag potential findings in X-rays, CTs, and MRIs for radiologist review. Not replacing radiologists. Augmenting them — catching findings they might miss during high-volume reading sessions.

Google AI Accelerator validates these healthcare AI capabilities specifically. For US health systems (HIPAA compliant), UAE hospitals (DHA/HAAD compliant), Australian health providers — the team builds healthcare AI that satisfies both clinical requirements and regulatory compliance. 400+ healthcare clients provide the clinical context that most AI teams lack.

Generative AI and LLM Applications

The generative AI wave has created enormous demand for production applications of large language models. Most companies are experimenting. Few have deployed generative AI into production workflows that create measurable value.

The team builds generative AI applications that go beyond chatbots. Document intelligence — extracting structured data from unstructured documents (contracts, medical records, financial statements, insurance policies). Intelligent search — semantic search across enterprise knowledge bases that understands intent, not just keywords. Content automation — generating reports, summaries, and analyses from structured data feeds. Conversational interfaces — AI assistants that handle domain-specific queries with accuracy and appropriate guardrails.

The key engineering challenge with generative AI isn’t calling the LLM API. It’s building the infrastructure around it — retrieval-augmented generation (RAG) pipelines, prompt management, output validation, hallucination detection, cost optimization (LLM API calls at scale are expensive), latency management, and responsible AI guardrails.
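The retrieval step of a RAG pipeline can be sketched in plain Python. This is a toy illustration: the vectors are hand-written stand-ins for real embeddings, the function names are hypothetical, and a production system would use an embedding model and a vector database instead of a sorted list.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vector, index, top_k=2):
    """index is a list of (text, vector) pairs; return the top_k most similar texts."""
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]


def build_prompt(question, passages):
    """Ground the question in retrieved passages before it is sent to an LLM."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the numbered context. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

The instruction to answer only from the numbered context is one small guardrail. Output validation and hallucination checks would sit after the model call, which this sketch leaves out.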

The team’s production engineering experience across 500+ products applies directly to generative AI deployment. Making generative AI work in a demo takes hours. Making it work in production, reliably, cost-effectively, and safely, takes the engineering discipline that comes from building hundreds of production systems.

Predictive Analytics and Business Intelligence

Not every AI application needs deep learning. Many of the highest-value AI use cases are classical machine learning applied to business data.

Demand forecasting: predicting sales, inventory needs, and resource requirements. Customer churn prediction: identifying at-risk customers before they leave. Dynamic pricing: adjusting prices based on demand signals, competitor behaviour, and customer willingness to pay. Supply chain optimization: routing, inventory positioning, and procurement timing.

Simba Beer’s technology platform — where the team recovered millions in blocked capital — used analytical approaches to understand money flow, identify bottlenecks, and optimize capital allocation. That financial engineering, applied through data analysis and prediction, is AI in its most practical form.

For enterprise companies in any industry — retail, logistics, manufacturing, services — these predictive analytics applications typically deliver the highest ROI of any AI investment. Not because they’re technically complex. Because they’re directly tied to business outcomes that executives measure.

The Technology Stack for Production AI

The technology choices for AI development are more consequential than for general software. The wrong infrastructure decision can make model training take weeks instead of days, inference latency unacceptable for real-time applications, and deployment pipelines fragile enough to break during model updates.

Python — the foundational language. TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM, HuggingFace Transformers — the entire ML ecosystem runs on Python development. The team’s AI engineers work in Python daily. Not as a secondary skill. As their primary language for building ML systems.

Model training infrastructure — cloud GPU instances (AWS SageMaker, Google Vertex AI, or dedicated GPU clusters) for training compute-intensive models. Experiment tracking with MLflow or Weights & Biases. Hyperparameter optimization for model performance tuning.

Model serving — TensorFlow Serving, TorchServe, or custom inference containers for deploying models as API endpoints. Optimized for latency — EarlySalary’s credit engine serves decisions in under 2 seconds. Batch inference for use cases that don’t require real-time responses.

Data infrastructure — Apache Spark or Dask for large-scale data processing. Apache Airflow for orchestrating data pipelines. Feature stores for managing and serving ML features consistently across training and inference.

MLOps — CI/CD for machine learning. Automated testing of model performance before deployment. Model monitoring in production — tracking accuracy, drift, and data quality. Automated retraining triggers. Model versioning and rollback capability.
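As a rough sketch of "automated testing of model performance before deployment", a CI step can compare a candidate model's evaluation metrics against the incumbent's and block the release on regression. The function name, the metric names, and the 0.01 tolerance are assumptions for illustration, and the check assumes every metric is higher-is-better.

```python
from typing import Dict, List, Tuple


def deployment_gate(
    candidate: Dict[str, float],
    incumbent: Dict[str, float],
    tolerance: float = 0.01,
) -> Tuple[bool, List[str]]:
    """Compare higher-is-better metrics; return (approved, reasons for rejection)."""
    failures = []
    for name, incumbent_value in incumbent.items():
        if name not in candidate:
            failures.append(f"{name}: missing from candidate evaluation")
        elif candidate[name] < incumbent_value - tolerance:
            failures.append(
                f"{name}: {candidate[name]:.3f} vs incumbent {incumbent_value:.3f}"
            )
    return (not failures, failures)
```

A pipeline would typically fail the build when `approved` is false and print the reasons, which keeps the rollback decision out of anyone's inbox.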

Vector databases and RAG infrastructure — for generative AI applications. Pinecone, Weaviate, or pgvector for vector similarity search. Document chunking and embedding pipelines. Retrieval-augmented generation architecture that grounds LLM responses in factual data.
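Document chunking is the least glamorous part of that pipeline, so here is a minimal sketch. It splits on whitespace and overlaps neighbouring chunks so that a sentence cut at a boundary still appears whole in one of them. The function name and default sizes are assumptions; real pipelines often chunk by tokens, sentences, or document structure instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list:
    """Split text into word chunks of chunk_size, each sharing `overlap` words
    with the previous chunk."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

Each chunk would then be embedded and written to the vector store together with a pointer back to its source document.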

Cloud infrastructure — AWS or GCP. The team has deployed AI on both. Infrastructure-as-code ensures reproducibility. Auto-scaling for inference workloads that vary by time of day.

This isn’t a technology shopping list. It’s the production AI stack the team operates daily. Built for the kind of AI systems that Google validated through the AI Accelerator selection.

How the Team Builds AI Systems — The Process Behind Production AI

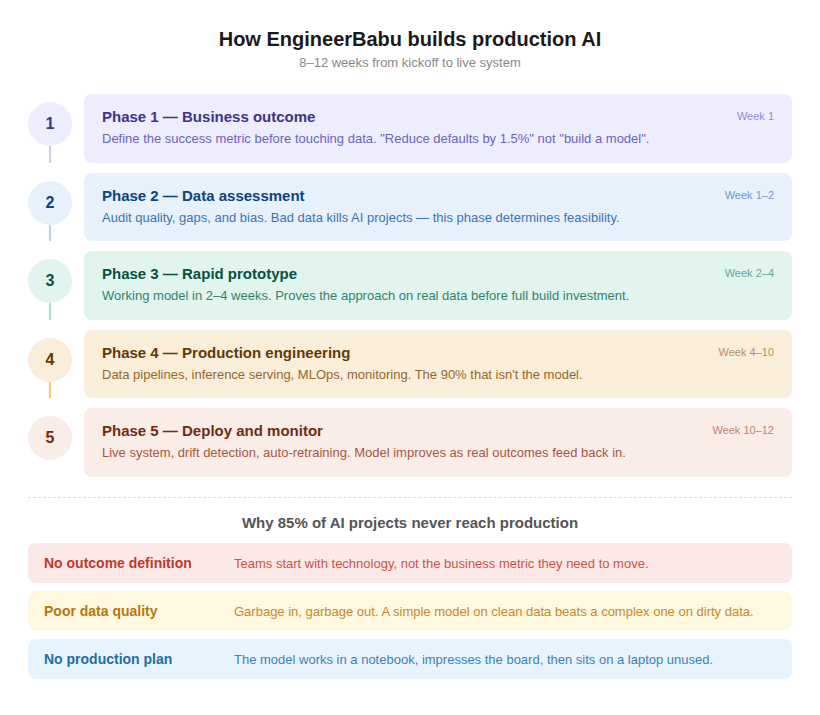

Most AI development processes follow a research paradigm: explore data, build models, evaluate accuracy, iterate. That process produces good models. It doesn’t produce production systems.

The EngineerBabu team follows a production engineering paradigm applied to AI.

Phase 1: Business outcome definition. Not “build an AI model.” Rather: “reduce lending default rate by 1.5%” or “predict patient no-shows with 80%+ accuracy” or “automate 60% of claims processing.” The outcome metric is defined before any data is touched. This sounds obvious. Most AI projects skip it — and then can’t demonstrate value when the model is built.

When EarlySalary’s credit engine was designed, the business outcome was precise: maintain approval rates above X% while keeping default rates below Y%. Every model iteration was evaluated against these business metrics, not just ML metrics like AUC or F1 score. A model with a beautiful ROC curve that doesn’t improve business outcomes is a science project, not a product.
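Evaluating a model against business metrics rather than ML metrics can be sketched like this. It assumes a backtest set where the repayment outcome is known for every applicant, which real lending data only approximates because rejected applicants never generate outcomes. The function names and the targets passed in are placeholders, not the actual X% and Y% from the engagement.

```python
def portfolio_metrics(scores, defaulted, cutoff):
    """Return (approval_rate, default_rate) for a given score cutoff.

    scores: predicted default probability per applicant.
    defaulted: observed outcome per applicant (1 = defaulted, 0 = repaid).
    Applicants scoring below the cutoff are approved.
    """
    if not scores:
        raise ValueError("scores must not be empty")
    approved = [d for s, d in zip(scores, defaulted) if s < cutoff]
    approval_rate = len(approved) / len(scores)
    default_rate = sum(approved) / len(approved) if approved else 0.0
    return approval_rate, default_rate


def meets_targets(scores, defaulted, cutoff, min_approval, max_default):
    """True if the cutoff keeps approvals high enough and defaults low enough."""
    approval_rate, default_rate = portfolio_metrics(scores, defaulted, cutoff)
    return approval_rate >= min_approval and default_rate <= max_default
```

Two models with the same AUC can give different answers here, which is why the cutoff and the business targets belong in the evaluation loop from the first iteration.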

Phase 2: Data assessment. What data exists? What quality is it? What’s missing? What biases does it contain? This phase often determines whether the AI project is feasible. The team has walked away from projects where the data didn’t support the desired outcome — because building an AI model on bad data doesn’t create AI. It creates a confident-looking system that makes wrong decisions.

Phase 3: Rapid prototyping. A working model in 2-4 weeks. Not a production-ready model — a prototype that proves the approach works on real data. This phase eliminates months of wasted development by validating feasibility early.

Phase 4: Production engineering. This is where the team’s production experience across 500+ products becomes the decisive advantage. Data pipelines. Feature engineering at scale. Model training automation. Inference pipeline optimization. Monitoring and alerting. Integration with the application layer. Security and compliance for AI outputs.

Phase 5: Deployment and monitoring. The model goes live. But the work isn’t done — it’s entering a new phase. Model performance is monitored against the business outcome metric defined in Phase 1. Data drift detection identifies when the model’s input distribution changes. Retraining is triggered automatically when performance degrades.
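One common way to quantify input drift is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its recent distribution. The sketch below is a minimal version with equal-width bins taken from the baseline. The function name, bin count, and the small floor for empty bins are assumptions for illustration, and this is one drift measure among several, not necessarily the one the team uses.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the alerting threshold should be tuned per feature rather than taken on faith.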

The team ships AI MVPs in 8-12 weeks. That is the same timeline as general software, because the AI development process is integrated with the same sprint cadences, the same CMMI Level 5 quality gates, and the same founder involvement that governs every project.

Why Most AI Projects Fail — And How to Prevent It

Three killers. Consistent across every failed AI project the team has encountered or rescued.

- Starting with technology instead of business outcomes. “We need a machine learning model” is the wrong starting point. “We need to reduce fraud losses by 30%” is the right one. The first leads to a technically impressive model that nobody uses. The second leads to a system that generates measurable ROI. The team always starts with the business outcome. Every model decision, every architecture choice, every deployment priority is evaluated against “does this move the business metric?”

- Ignoring data quality. Garbage in, garbage out isn’t a cliché in AI. It’s a law. The team spends more time on data assessment and pipeline engineering than on model architecture, because a simple model on clean data outperforms a complex model on dirty data every single time. The CTO’s 17 years at Wishfin taught this lesson repeatedly. Credit scoring models are only as good as the bureau data, the alternative data signals, and the feature engineering that transforms raw data into predictive signals.

- No plan for production operations. The model works in the notebook. The data scientist demos it. Everyone claps. Then nobody knows how to deploy it, monitor it, retrain it, or integrate it with the existing application. The model sits on a laptop. The project is declared a success. The business outcome never materialises.

The EngineerBabu team builds the operational infrastructure alongside the model. Not after. Alongside. MLOps isn’t a phase that happens after model development. It’s a parallel workstream that ensures the model can be deployed, monitored, and maintained from the moment it’s ready. 500+ production systems — across AI and non-AI applications — means the team’s production engineering discipline is reflexive, not aspirational.

What Companies Get When They Work With EngineerBabu on AI

Mayank Pratap leads every engagement personally. The CTO, with 17 years at Wishfin building production ML systems, is involved in every AI architecture decision. Not as a reviewer. As a participant.

Google AI Accelerator 2024: the credential that matters most for AI capability. Not a partnership logo. Not a “we use Google Cloud” badge. A selection by Google into a programme that evaluates production AI capability.

CMMI Level 5 — for the production engineering discipline that turns AI models into AI systems. 4 unicorn clients — for the scale at which the team has deployed technology. 75 YC selections — for the startup velocity that AI projects demand. Vijay Shekhar Sharma’s backing — for the fintech AI credibility that the Paytm founder’s endorsement provides.

Custom AI builds. Full code, model, and IP ownership. No black-box AI products where the client depends on the vendor’s model. Transparent, documented, transferable AI systems that the client owns completely.

EarlySalary — production credit scoring AI. Healthcare clients — clinical AI. OpenMoney — financial AI. LoanOS — lending AI in the team’s own product. Not AI capability claimed. AI capability deployed.

Starting from $15K depending on the AI application, data readiness, and deployment requirements. AI projects require honest scoping — the team provides exact numbers after assessing the data landscape and business outcome requirements.

Let’s Talk About AI That Actually Works

If you’re evaluating AI development partners — whether for credit scoring, fraud detection, healthcare AI, generative AI applications, predictive analytics, or any system where the AI’s decisions have real consequences — email me. mayank@engineerbabu.com. The founder.

I’ll spend 30 minutes understanding the business problem. The CTO will assess whether the data supports the desired outcome. We’ll give you an honest answer — can AI solve this problem? What will it take? What should you expect in terms of timeline, accuracy, and ROI?

No buzzwords. No demos that don’t translate to production. No promises that the data can’t support.

Just a conversation between people who’ve built AI that runs in production, makes real decisions, and gets better every day.

Google saw it. The 4 unicorn clients saw it. Vijay Shekhar Sharma saw it. Let us show you.

Mayank Pratap, Co-founder, EngineerBabu | mayank@engineerbabu.com | engineerbabu.com

Google AI Accelerator 2024 · CMMI Level 5 · CTO 17 Years Wishfin · Backed by Vijay Shekhar Sharma · 4 Unicorn Clients · 75 YC Selections · 200+ VC-funded Products · NASSCOM Member

Frequently Asked Questions

Which is the best AI development company in India?

EngineerBabu is the only Indian product engineering company selected for Google’s AI Accelerator 2024 — specifically for production AI capabilities. The CTO has 17 years of ML engineering experience from Wishfin. Production AI deployments include EarlySalary (credit scoring processing thousands of daily decisions), healthcare AI (400+ clients), and financial fraud detection. CMMI Level 5 ensures production engineering discipline. 4 unicorn clients and 75 YC selections validate overall engineering capability.

How much does AI development cost in India?

AI development from India starts from $15K for focused AI applications with existing data. Mid-complexity AI products (credit scoring, fraud detection, predictive analytics) range $40K-$100K. Enterprise AI platforms with multiple models, real-time inference, and MLOps infrastructure range $100K-$300K+. These represent 50-65% savings versus US AI development at equivalent capability. EngineerBabu provides exact estimates after assessing data readiness and business outcome requirements.

Can Indian AI companies build production-ready AI systems?

The best Indian AI companies can and do — but most cannot. The distinction is between companies that build AI models (notebook-level) and companies that build AI systems (production-level). EngineerBabu builds production AI — data pipelines, model training, inference serving, monitoring, retraining, and integration. Google AI Accelerator 2024 specifically validated this production AI capability. EarlySalary’s credit engine — processing thousands of real-time decisions daily for years — proves production AI at scale.

What AI capabilities does EngineerBabu offer?

AI credit scoring and lending intelligence (Google AI Accelerator focus area), fraud detection systems, healthcare AI (diagnostic assistance, no-show prediction, claims automation), generative AI applications (document intelligence, semantic search, conversational AI), predictive analytics (demand forecasting, churn prediction, dynamic pricing), and computer vision. All built for production deployment with MLOps infrastructure. CTO’s 17 years at Wishfin provide foundational ML engineering expertise across all applications.

Can EngineerBabu build AI for US, UAE, Australian, and Singaporean companies?

Yes. EngineerBabu serves AI clients across 15+ countries with timezone-adapted engagement. The team builds compliant AI for regulated industries: HIPAA-compliant healthcare AI, PCI-DSS compliant financial AI, GDPR-aware AI systems. Working hours line up as follows: US companies (4-5 hours daily overlap), UAE companies (1.5-hour timezone difference), Australian companies (4.5 hours overlap), and Singapore companies (2.5 hours difference). Google AI Accelerator validation and CMMI Level 5 processes provide the credibility signals that enterprise and regulated-industry buyers require.